The Technical SEO Audit: A Systematic Approach to Site Health and Performance

When a prospective client asks what a top-tier SEO agency actually delivers, the answer rarely begins with rankings. It begins with crawlability. Before any keyword can surface in search results, a search engine must first discover the page, render its content, and index it correctly. A technical SEO audit is the diagnostic process that ensures this pipeline functions. Without it, on-page optimization and content strategy operate on a broken foundation.

This article provides a practical checklist for evaluating and improving technical SEO health—covering crawl budget management, Core Web Vitals, duplicate content resolution, and the structural elements that determine whether your site is search-engine-ready. It is written for marketing leads, product managers, and agency stakeholders who need to brief an SEO team or run an internal audit with precision.

Understanding Crawl Budget and Crawlability

Search engines allocate a finite crawl budget to each domain. This budget determines how many pages a crawler will request in a given period and how much server capacity it will consume. For large sites (e-commerce catalogs, news archives, enterprise portals), a mismanaged crawl budget means critical pages go undiscovered while low-value URLs (session IDs, filter parameters, pagination loops) consume the allocation.
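As a rough illustration of where crawl budget goes, the sketch below tallies one crawler's requests from access-log lines and reports how many hit parameterized URLs. It assumes logs in the common "combined" format; the sample lines, IP addresses, and the `summarize_crawl` helper are made up for this example. Matching on the user-agent string alone is also a simplification, since that string can be spoofed.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Combined log format: ... "METHOD path HTTP/x" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ '
    r'"[^"]*" "(?P<agent>[^"]*)"'
)


def summarize_crawl(lines, bot_token="Googlebot"):
    """Tally status codes and parameterized-URL hits for one crawler."""
    statuses = Counter()
    total = 0
    parameterized = 0
    for line in lines:
        match = LOG_LINE.search(line)
        if match is None or bot_token not in match.group("agent"):
            continue  # unparseable line or a different client
        total += 1
        statuses[match.group("status")] += 1
        if urlsplit(match.group("path")).query:
            parameterized += 1  # URL carries a query string
    share = parameterized / total if total else 0.0
    return {"total": total, "statuses": dict(statuses), "parameterized_share": share}


# Made-up sample data for illustration only.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
SAMPLE_LOG = [
    f'66.249.66.1 - - [10/Jan/2025:10:00:00 +0000] "GET /shoes HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Jan/2025:10:00:01 +0000] "GET /shoes?sort=price HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Jan/2025:10:00:02 +0000] "GET /cart?sessionid=abc HTTP/1.1" 404 312 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [10/Jan/2025:10:00:03 +0000] "GET /shoes HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

print(summarize_crawl(SAMPLE_LOG))
```

A high `parameterized_share` or a large count of 4xx/5xx responses suggests that crawler requests are being spent on URLs that should not need crawling at all.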

The first step in any technical audit is to verify that search engines can reach your content efficiently. This involves three core checks:

- Review robots.txt – Ensure it does not inadvertently block important sections. Common mistakes include disallowing `/css/` or `/js/` (which can block rendering), or blocking entire directories like `/blog/`.

- Inspect XML sitemaps – Confirm that sitemaps list only canonical, indexable URLs. Exclude paginated pages, parameter-heavy URLs, and pages carrying a `noindex` directive.

- Analyze server logs – Identify which user-agent (Googlebot, Bingbot) is crawling, how often, and which response codes (200, 301, 404, 500) are returned. A high volume of 404s or redirect chains wastes budget.
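The robots.txt check in the list above can be sketched with Python's standard-library `urllib.robotparser`. The robots.txt content and the list of paths that must stay crawlable are hypothetical. The standard-library parser implements basic prefix matching, so treat the result as a first pass rather than a reproduction of how any particular search engine interprets the file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt that accidentally blocks a rendering asset directory.
ROBOTS_TXT = """\
User-agent: *
Disallow: /css/
Disallow: /admin/
"""

# Hypothetical paths the audit expects crawlers to reach.
MUST_BE_CRAWLABLE = ["/blog/seo-audit", "/css/site.css", "/js/app.js"]


def blocked_paths(robots_txt, paths, user_agent="Googlebot"):
    """Return the paths that the given robots.txt disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [path for path in paths if not parser.can_fetch(user_agent, path)]


print(blocked_paths(ROBOTS_TXT, MUST_BE_CRAWLABLE))  # ['/css/site.css']
```

Any path returned here is worth reviewing by hand: either the rule is a mistake, or the path does not belong on the must-crawl list.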

Core Web Vitals: Beyond the Buzzwords

Core Web Vitals (LCP, CLS, and INP, which replaced FID) are not optional metrics. Google uses them as signals when its ranking systems assess page experience. Yet many audits treat them as a checkbox exercise: run a Lighthouse report, note the score, move on. A rigorous analysis digs deeper.

Largest Contentful Paint (LCP) measures loading performance. The target is under 2.5 seconds. Common causes of poor LCP include uncompressed images, render-blocking JavaScript, and slow server response times (TTFB). Fixes involve image optimization (modern formats such as WebP, plus lazy loading for below-the-fold images only, never for the LCP element itself), deferring non-critical scripts, and implementing CDN caching.

Cumulative Layout Shift (CLS) measures visual stability. A CLS score below 0.1 is good. Layout shifts often stem from dynamically injected ads, images without explicit dimensions, or web fonts that load asynchronously. The fix is to reserve space for all media and set explicit width/height attributes.

Interaction to Next Paint (INP) replaced First Input Delay (FID) as a Core Web Vital in March 2024. It measures responsiveness to user interactions. Poor INP is typically caused by long tasks on the main thread: heavy JavaScript execution, inefficient event handlers, or third-party scripts. Optimization requires code splitting, debouncing input handlers, and auditing third-party tags.

| Metric | Target | Common Culprit | Primary Fix |
|---|---|---|---|
| LCP | ≤ 2.5s | Unoptimized hero images | Preload LCP element, serve WebP, reduce TTFB |
| CLS | ≤ 0.1 | Ads without reserved space | Set width/height on all media, use `aspect-ratio` |
| INP | ≤ 200ms | Long main-thread tasks | Defer non-critical JS, split bundles, lazy-load below-fold |
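The targets in the table above can be turned into a small rating helper for field data. This is a minimal sketch: the "good" bounds come from the table, the "poor" bounds (4.0 s, 0.25, 500 ms) are the values Google publishes for each metric, and the nearest-rank 75th percentile is a simplification chosen for this example. The function names and sample values are hypothetical.

```python
import math

# metric: (upper bound for "good", upper bound for "needs improvement")
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "CLS": (0.1, 0.25),  # unitless score
    "INP": (200, 500),   # milliseconds
}


def p75(samples):
    """75th percentile using the nearest-rank method (a simplification)."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric, value):
    """Classify a metric value as good, needs improvement, or poor."""
    good, needs_improvement = THRESHOLDS[metric]
    if value <= good:
        return "good"
    if value <= needs_improvement:
        return "needs improvement"
    return "poor"


# Made-up LCP samples in seconds, for illustration only.
lcp_samples = [1.8, 2.1, 2.4, 3.9]
print(rate("LCP", p75(lcp_samples)))
```

Rating the 75th percentile rather than the mean keeps a handful of fast page loads from hiding a slow experience for a sizable share of visitors.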

Resolving Duplicate Content and Canonicalization

Duplicate content is not a penalty—it is a dilution of ranking signals. When multiple URLs serve identical or near-identical content, search engines must choose which version to index. They may pick the wrong one, or worse, index none if the duplicates are too numerous.

The primary tool for consolidation is the canonical tag (`rel="canonical"`). This tag tells search engines: "Treat this URL as the master copy." However, canonical tags are advisory, not directive. If internal linking contradicts the canonical (e.g., linking to parameterized URLs instead of the canonical), the tag may be ignored.

A technical audit should identify:

- URL parameters that generate duplicate pages (e.g., `?sort=price`, `?color=red`)

- WWW vs. non-WWW and HTTP vs. HTTPS versions not properly redirected

- Trailing slash inconsistencies (e.g., `/page/` vs `/page`)

- Syndicated content that lacks a canonical pointing back to the original source
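Several of the patterns in the list above (protocol, `www`, trailing slash, and parameter variants) can be surfaced by normalizing crawled URLs and grouping the ones that collapse to the same form. The sketch below is one way to do that. The `IGNORED_PARAMS` set is an assumption for illustration, since which parameters actually change page content is site-specific. Grouping by URL shape only nominates candidates; it does not prove the pages serve the same content.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that do not change the main content (site-specific).
IGNORED_PARAMS = {"sort", "color", "sessionid", "utm_source", "utm_medium"}


def normalize(url):
    """Collapse protocol, www, trailing-slash, and ignored-parameter variants."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]
    path = parts.path.rstrip("/") or "/"
    kept = sorted((k, v) for k, v in parse_qsl(parts.query) if k not in IGNORED_PARAMS)
    return urlunsplit(("https", host, path, urlencode(kept), ""))


def duplicate_groups(urls):
    """Map each normalized URL to its variants, keeping groups with 2+ members."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}


crawled = [
    "http://www.example.com/page/",
    "https://example.com/page",
    "https://example.com/page?sort=price",
    "https://example.com/other?id=2",
]
print(duplicate_groups(crawled))
```

Each group is a candidate for a single canonical URL, with the remaining variants either redirected or pointed at it with `rel="canonical"`.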

On-Page Optimization: Beyond Meta Tags

On-page optimization is often reduced to keyword-stuffed title tags and meta descriptions. A modern approach treats it as a holistic alignment between content, user intent, and technical structure.

Keyword research must go beyond search volume. It requires intent mapping—classifying queries into informational, navigational, commercial, or transactional categories. A page optimized for a transactional query ("buy running shoes size 10") should not be the same as one targeting an informational query ("how to choose running shoes"). The content, internal links, and call-to-action must reflect the intent.

Content strategy then determines which pages exist, how they interlink, and whether they satisfy the user's journey. A common error is creating thin content (pages with 200 words and no unique value) just to target a long-tail keyword. Google's helpful content signals, which were folded into its core ranking systems in March 2024, are designed to demote such pages.

The audit checklist for on-page optimization includes:

- Title tags – Unique, descriptive, under 60 characters, primary keyword near the front

- Meta descriptions – Persuasive, under 160 characters, includes call-to-action

- Header structure (H1–H6) – Single H1 per page, hierarchical nesting, keyword placement in H2s

- Image alt text – Descriptive, not keyword-stuffed; essential for accessibility

- Internal linking – Relevant anchor text, links to authority pages, no orphan pages

- Schema markup – Product, FAQ, Article, BreadcrumbList as appropriate
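A few items from the checklist above (title length, meta description, H1 count, image alt text) lend themselves to automated checks. The sketch below uses Python's standard-library `html.parser`. The class and function names are hypothetical, the character limits are the ones stated in the checklist, and flagging every empty `alt` is a simplification, because decorative images may legitimately use `alt=""`.

```python
from html.parser import HTMLParser


class OnPageParser(HTMLParser):
    """Collects the handful of on-page elements this sketch checks."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.meta_description = None
        self.h1_count = 0
        self.images_missing_alt = 0
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "h1":
            self.h1_count += 1
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.meta_description = attrs.get("content") or ""
        elif tag == "img" and not (attrs.get("alt") or "").strip():
            self.images_missing_alt += 1  # simplification: decorative images allowed alt=""

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def audit_page(html):
    """Return a list of human-readable on-page issues for one HTML document."""
    parser = OnPageParser()
    parser.feed(html)
    parser.close()
    issues = []
    title = parser.title.strip()
    if not title:
        issues.append("missing title")
    elif len(title) > 60:
        issues.append("title over 60 characters")
    if parser.meta_description is None:
        issues.append("missing meta description")
    elif len(parser.meta_description) > 160:
        issues.append("meta description over 160 characters")
    if parser.h1_count != 1:
        issues.append(f"expected 1 H1, found {parser.h1_count}")
    if parser.images_missing_alt:
        issues.append(f"{parser.images_missing_alt} image(s) without alt text")
    return issues


SAMPLE_HTML = """<html><head><title>Running Shoes Guide</title></head>
<body><h1>Running Shoes</h1><h1>Second heading</h1>
<img src="shoe.jpg"><img src="logo.png" alt="Store logo"></body></html>"""

print(audit_page(SAMPLE_HTML))
```

Checks like these only catch mechanical problems; whether a title is descriptive or an anchor text is relevant still needs human review.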

Link Building: Risk-Aware Acquisition

Link building remains a high-impact ranking factor, but the methods matter enormously. A top-tier agency does not buy links from private blog networks (PBNs) or participate in link exchanges. These black-hat tactics generate artificial link profiles that Google’s SpamBrain algorithm detects with increasing accuracy. The result is not a ranking boost—it is a manual action or algorithmic devaluation.

A responsible backlink profile strategy focuses on:

- Earned links – Through original research, data-driven content, or expert commentary

- Digital PR – Securing mentions from reputable publications

- Broken link building – Replacing dead links on relevant resource pages with your content

- Competitor gap analysis – Identifying where competitors earn links that you do not

Conversely, a backlink audit should flag these warning signs:

- Links from irrelevant or low-quality domains

- Over-optimized anchor text (exact match keywords across many links)

- Sudden spikes in link velocity

- Links from sites that also link to known spam
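Over-optimized anchor text, one of the items in the list above, can be approximated by measuring how much of a backlink profile uses exact-match keywords. The sketch below is illustrative only: the 30% cutoff in `max_share` is an arbitrary assumption, not a published threshold, and the anchor list is made up.

```python
from collections import Counter


def anchor_text_shares(anchors):
    """Return each distinct anchor text's share of all non-empty anchors."""
    normalized = [a.strip().lower() for a in anchors if a.strip()]
    counts = Counter(normalized)
    total = len(normalized)
    return {text: count / total for text, count in counts.most_common()}


def over_optimized(anchors, target_keywords, max_share=0.3):
    """Flag exact-match keyword anchors whose share exceeds max_share (assumed cutoff)."""
    shares = anchor_text_shares(anchors)
    targets = {keyword.lower() for keyword in target_keywords}
    return {text: share for text, share in shares.items()
            if text in targets and share > max_share}


# Made-up anchor texts from a hypothetical backlink export.
anchors = ["buy running shoes"] * 4 + [
    "Example Store", "example.com", "this guide",
    "https://example.com/shoes", "here", "Example Store",
]
print(over_optimized(anchors, ["buy running shoes"]))
```

A natural profile tends to be dominated by brand names, bare URLs, and generic phrases, so a single commercial keyword taking a large share is worth a closer look.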

Running the Audit: A Practical Sequence

A technical SEO audit is not a single report—it is a process. Follow this sequence to ensure nothing is missed:

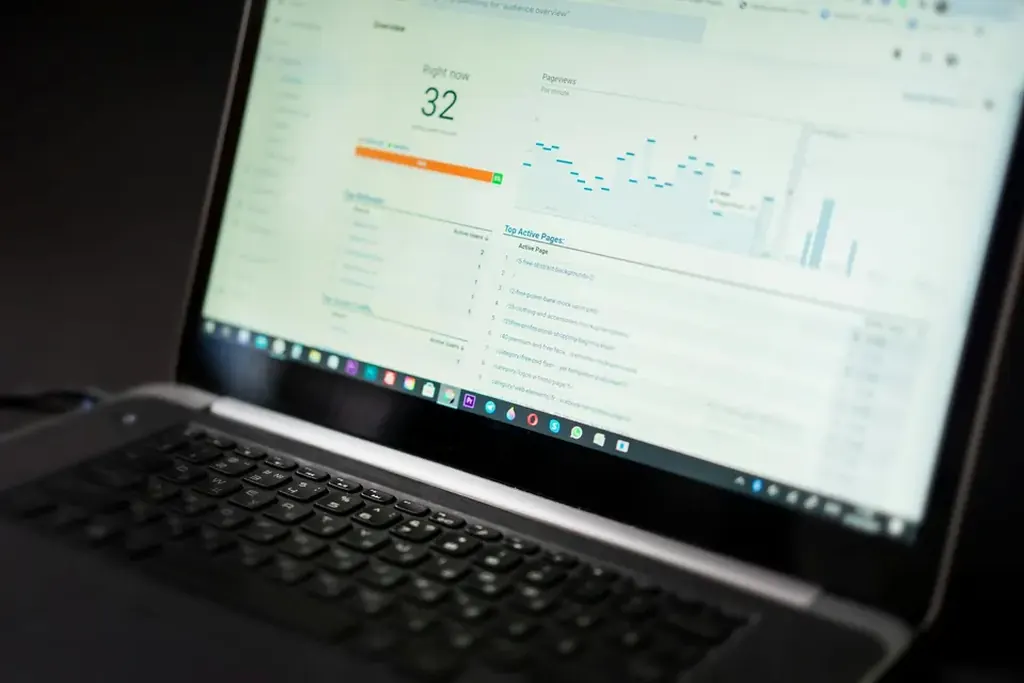

- Crawl the site – Use a tool like Screaming Frog or Sitebulb. Export all URLs, response codes, meta tags, and internal links.

- Analyze server logs – Identify crawl patterns, error rates, and wasted budget.

- Check Core Web Vitals – Use CrUX (Chrome User Experience Report) for field data, Lighthouse for lab data.

- Review robots.txt and sitemaps – Validate directives and coverage.

- Identify duplicate content – Use the crawl data to find identical or near-identical pages.

- Audit on-page elements – Check title tags, meta descriptions, headers, and schema.

- Evaluate backlink profile – Use Ahrefs or Majestic to assess quality and risk.

- Prioritize fixes – Rank issues by impact (crawlability > indexation > on-page > links).
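The final prioritization step can be sketched as a sort over the findings from the earlier steps. The category order follows the ranking given in the list above (crawlability, then indexation, then on-page, then links). Using the number of affected URLs as a tie-breaker is an assumption of this example, and the `Issue` fields and sample findings are hypothetical.

```python
from dataclasses import dataclass

# Lower rank means higher priority, following the order stated in the list above.
CATEGORY_RANK = {"crawlability": 0, "indexation": 1, "on-page": 2, "links": 3}


@dataclass
class Issue:
    description: str
    category: str
    affected_urls: int


def prioritize(issues):
    """Sort by category rank, then by affected URL count (largest first)."""
    return sorted(issues, key=lambda i: (CATEGORY_RANK[i.category], -i.affected_urls))


# Made-up findings for illustration only.
findings = [
    Issue("Missing meta descriptions", "on-page", 340),
    Issue("robots.txt blocks /css/", "crawlability", 1),
    Issue("Parameter URLs without canonical", "indexation", 1200),
    Issue("Redirect chains on category pages", "crawlability", 85),
]

for issue in prioritize(findings):
    print(f"{issue.category:13} {issue.affected_urls:5}  {issue.description}")
```

In practice the tie-breaker might instead weigh traffic or revenue per URL; the point is that the ordering rule is explicit and repeatable rather than decided ad hoc.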

Summary: What a Top-Tier Agency Delivers

A professional SEO agency does not promise instant rankings or guaranteed first-page positions. It delivers a methodical, data-driven process: a technical audit that identifies crawl barriers, Core Web Vitals optimizations, duplicate content resolution, and a link building strategy built on earned, not bought, signals. The output is a prioritized roadmap—one that respects the site's architecture, the search engine's constraints, and the user's intent.

For more on how to evaluate an agency's technical capabilities, see our guide on choosing an SEO partner. To run your own audit, start with crawl budget optimization and Core Web Vitals diagnostics.
