Technical SEO & Site Health: A Practitioner’s Checklist for Agency Engagement

When your organic traffic plateaus or a site migration produces unexpected ranking drops, the root cause is almost never “Google hates us.” In many audits I’ve reviewed, the culprit is a foundational technical issue—crawl waste, canonical misconfiguration, or unoptimized Core Web Vitals. This article walks you through the essential checks your SEO agency should be performing, the risk areas that demand scrutiny, and how to brief campaigns that actually move performance metrics.

1. Crawl Budget & Indexation: The Foundation

Search engines allocate a finite crawl budget to each site, and it becomes a practical constraint mainly on large or frequently updated sites. If your site has 50,000 URLs but only 2,000 contain unique, valuable content, Googlebot can spend most of its visits on thin pages, parameter-based duplicates, or soft 404s. The result? Critical product pages or cornerstone articles may remain uncrawled for weeks.

Your agency should audit:

- Crawl rate vs. crawl demand: Compare the number of URLs Googlebot attempts to crawl daily against the total indexable URLs. A large gap suggests either crawl budget exhaustion or a robots.txt directive blocking important sections.

- Log file analysis: Server logs reveal which URLs Googlebot actually visited, how often, and with what response codes. If you see repeated 301 redirects or 404s on high-value pages, that’s a red flag.

- XML sitemap hygiene: Each sitemap should contain only canonical, indexable URLs. Remove pages with noindex tags, redirects, or thin content. Use multiple sitemaps (e.g., products, blog, categories) and reference them in robots.txt.
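The log file check above can be sketched in a few lines of Python. This is a minimal sketch, assuming access logs in the common "combined" format and identifying Googlebot by user-agent substring only (a real audit should also verify the requesting IP, since the user agent can be spoofed). The function names and the sample log lines are hypothetical.

```python
import re
from collections import Counter, defaultdict

# Pulls the request path, status code, and user agent out of a combined-format line.
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

def summarize_googlebot_hits(lines):
    """Count Googlebot requests per URL path, broken down by status code."""
    hits = defaultdict(Counter)
    for line in lines:
        match = LOG_PATTERN.search(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")][int(match.group("status"))] += 1
    return hits

def flag_problem_paths(hits, statuses=(301, 404)):
    """Return paths where Googlebot received any of the given status codes."""
    return sorted(
        path for path, codes in hits.items()
        if any(codes[status] for status in statuses)
    )

# Hypothetical sample lines; the last one is a regular browser and is ignored.
GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
sample_log = [
    '66.249.66.1 - - [10/Jan/2025:06:25:01 +0000] "GET /products/widget HTTP/1.1" 200 5123 "-" "' + GOOGLEBOT_UA + '"',
    '66.249.66.1 - - [10/Jan/2025:06:25:09 +0000] "GET /old-page HTTP/1.1" 404 312 "-" "' + GOOGLEBOT_UA + '"',
    '203.0.113.7 - - [10/Jan/2025:06:26:40 +0000] "GET /other HTTP/1.1" 404 312 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

hits = summarize_googlebot_hits(sample_log)
problems = flag_problem_paths(hits)
print(problems)
```

Cross-referencing the flagged paths against your list of high-value URLs turns the raw counts into a prioritized fix list.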

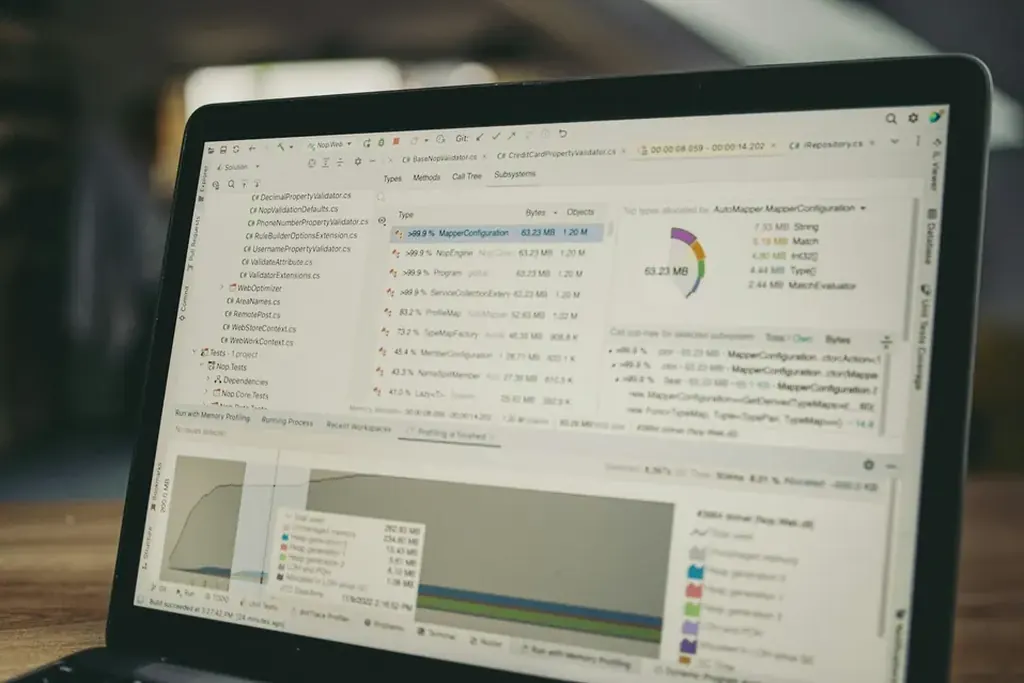

2. Core Web Vitals & Performance Metrics

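Google's page experience signals now include Interaction to Next Paint (INP) as a Core Web Vital; it replaced First Input Delay (FID) in March 2024. Your agency must measure real-user field data from the Chrome User Experience Report (CrUX) alongside lab data from Lighthouse. PageSpeed Insights shows both side by side. A perfect Lighthouse score does not guarantee good CrUX data, because a lab test simulates a single device and network while field data reflects your actual visitors.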

Key metrics to track:

| Metric | Target | Common Failure Cause |
|---|---|---|
| Largest Contentful Paint (LCP) | ≤ 2.5 seconds | Unoptimized hero images, slow server response time, render-blocking resources |
| Cumulative Layout Shift (CLS) | ≤ 0.1 | Ads or embeds without reserved dimensions, web fonts causing FOIT/FOUT |
| Interaction to Next Paint (INP) | ≤ 200 ms | Heavy JavaScript execution, long tasks on main thread, third-party scripts |
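
To make the targets in the table actionable, field values can be bucketed automatically. The sketch below is illustrative: the "good" bounds come from the table, while the "poor" boundaries (4.0 s, 0.25, 500 ms) and the convention of judging the 75th-percentile value come from Google's published Core Web Vitals thresholds rather than from this article. The function name `rate_metric` is hypothetical.

```python
# (good upper bound, poor lower bound) for each metric.
# "Good" bounds match the table; "poor" bounds are Google's published thresholds.
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
    "INP": (200, 500),   # milliseconds
}

def rate_metric(name, p75_value):
    """Classify a 75th-percentile field value as good, needs improvement, or poor."""
    good_max, poor_min = THRESHOLDS[name]
    if p75_value <= good_max:
        return "good"
    if p75_value <= poor_min:
        return "needs improvement"
    return "poor"

# Hypothetical field data for one page template.
field_data = {"LCP": 3.1, "CLS": 0.05, "INP": 620}
ratings = {name: rate_metric(name, value) for name, value in field_data.items()}
print(ratings)
```

Running this per page template, rather than per site, makes it easier to see which templates are dragging down the overall assessment.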

What to ask your agency:

- Are they using a Real User Monitoring (RUM) tool (e.g., RUMvision, Google’s Web Vitals library) to track field data?

- Do they have a process for prioritizing fixes—for example, addressing LCP before CLS if the LCP element is a slow-loading hero image?

- Have they audited third-party scripts (analytics, chat widgets, heatmaps) that may block the main thread?

3. Duplicate Content & Canonicalization

Duplicate content dilutes ranking signals and wastes crawl budget. The most common sources are URL parameters (sort, filter, tracking), HTTP vs. HTTPS versions, and product variations with identical descriptions.

Your agency should implement:

- Canonical tags with absolute URLs: Each page should have a self-referencing canonical unless it’s a syndicated or filtered version. Avoid relative URLs in `<link rel="canonical">`.

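- Handling for parameter-based duplicates: Google retired Search Console's URL Parameters tool in 2022, so parameter handling now has to live on the site itself. Point parameterized URLs (e.g., `?sort=price`) at the clean URL with a canonical tag, and keep internal links and sitemaps free of parameterized URLs. Avoid canonical chains: a filter page should canonicalize directly to its final target (usually the category page), and a category page should never canonicalize to a product page.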

- Hreflang tags for international sites: If you serve multiple languages, ensure each language variant has a reciprocal hreflang annotation; annotations that are not returned by the target page are ignored. Add an `x-default` annotation as the fallback for visitors whose language or region matches none of the listed variants.

To verify the implementation, the audit should:

- Crawl the entire site with a tool like Screaming Frog or Sitebulb.

- Export all pages with duplicate or missing canonical tags.

- Identify any page where the canonical points to a different domain (cross-domain canonicalization is rare but valid for syndicated content).

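- Verify that paginated pages (`/page/2/`) are crawlable and carry self-referencing canonicals rather than canonicalizing to page 1. Google stopped using `rel="next"` and `rel="prev"` as indexing signals in 2019, so that markup is harmless but no longer sufficient on its own; a view-all version with a self-referencing canonical remains an option when it loads quickly.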
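
The canonical checks in this section can be sketched with Python's standard library. This is a minimal sketch that assumes you already have each page's URL and raw HTML (for example, from a crawler export); the class name, function name, and status labels are hypothetical, and the comparison ignores only a trailing slash.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse

class CanonicalParser(HTMLParser):
    """Collect the href of every <link rel="canonical"> in a document."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        if tag != "link":
            return
        attr_map = dict(attrs)
        rel_values = (attr_map.get("rel") or "").lower().split()
        if "canonical" in rel_values:
            self.canonicals.append(attr_map.get("href") or "")

def check_canonical(page_url, html):
    """Return a short status label describing the page's canonical tag."""
    parser = CanonicalParser()
    parser.feed(html)
    if not parser.canonicals:
        return "missing"
    if len(parser.canonicals) > 1:
        return "multiple"
    href = parser.canonicals[0]
    target = urlparse(href)
    if not (target.scheme and target.netloc):
        return "relative"
    if target.netloc != urlparse(page_url).netloc:
        return "cross-domain"
    if href.rstrip("/") == page_url.rstrip("/"):
        return "self-referencing"
    return "points elsewhere"

# Hypothetical example: a filtered URL canonicalizing to its category page.
status = check_canonical(
    "https://example.com/shoes?sort=price",
    '<head><link rel="canonical" href="https://example.com/shoes"></head>',
)
print(status)
```

Pages labeled "missing", "multiple", or "relative" are usually the first to fix; "cross-domain" and "points elsewhere" need a human to confirm they are intentional.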

4. On-Page Optimization: Beyond Meta Tags

On-page SEO is often reduced to stuffing keywords into title tags and H1s. A mature approach involves intent mapping: understanding whether a query signals informational, navigational, commercial, or transactional intent, then structuring content accordingly.

Your agency should deliver:

- Keyword research with intent clusters: Group target keywords by funnel stage. For example, “how to fix leaky faucet” (informational) requires a step-by-step guide, while “plumber near me” (transactional) needs a local landing page with reviews and a click-to-call button.

- Content gap analysis: Compare your site’s topical coverage against competitors. If a competitor ranks for “best SEO tools for small business” with a listicle, and you only have a blog post about “SEO basics,” you have a gap.

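- Structured data markup: Implement schema.org types relevant to your content, such as Article, Product, FAQPage, HowTo, and LocalBusiness. Use Google's Rich Results Test to validate, and remember that valid markup does not guarantee a rich result: Google has sharply limited FAQ rich results and no longer shows HowTo rich results.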
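
As an illustration of the structured data item, the sketch below builds Article markup as JSON-LD, the format Google recommends for structured data. The helper names, the author, the date, and the URL are placeholders, and the property set is a small illustrative subset rather than a complete list of what any particular rich result requires.

```python
import json

def build_article_jsonld(headline, author_name, date_published, url):
    """Return schema.org Article markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    return json.dumps(data, indent=2)

def to_script_tag(jsonld):
    """Wrap JSON-LD for embedding in HTML, escaping "</" so a value cannot close the tag."""
    safe = jsonld.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{safe}\n</script>'

markup = build_article_jsonld(
    headline="Technical SEO & Site Health",
    author_name="Jane Doe",                       # placeholder author
    date_published="2025-01-15",                  # placeholder ISO 8601 date
    url="https://example.com/technical-seo-checklist",  # placeholder URL
)
print(to_script_tag(markup))
```

Generating the markup from the same fields the CMS uses to render the page helps keep the structured data consistent with the visible content.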

5. Link Building: Quality Over Quantity

A backlink profile is not a number; it's a trust signal. A single link from a high-authority, relevant site (e.g., a .edu resource page) can outweigh hundreds of low-quality directory links. However, many agencies still sell “link packages” that rely on private blog networks (PBNs) or paid links, which violate Google's spam policies (part of Google Search Essentials, formerly the Webmaster Guidelines).

What to look for in a link building campaign:

| Approach | Risk Level | Typical Outcomes |
|---|---|---|
| Guest posting on relevant industry blogs | Low | Gradual authority growth; requires editorial oversight |
| Broken link building (replacing dead resources) | Low | High acceptance rate; valuable for .gov/.edu domains |
| Digital PR (data-driven stories, surveys) | Medium | Fast visibility; requires a compelling angle |
| PBN links or paid links | High | Possible short-term ranking boost, with a real risk of a manual action or algorithmic devaluation |

Your agency should provide:

- A link prospect list with third-party authority metrics such as Domain Authority (Moz) and Trust Flow (Majestic). These are vendor estimates, not Google signals, so weigh them below the prospect's relevance to your niche.

- A disavow file for toxic links discovered during the audit. Do not disavow indiscriminately—only links that are clearly spammy or from penalized sites.

- A monthly report showing new links acquired, lost links, and anchor text distribution. Watch for over-optimized anchor text (e.g., “best SEO agency” repeated on 80% of links).
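
The anchor text check in the monthly report can be sketched as follows. This is a minimal sketch, assuming the anchors arrive as a plain list of strings exported from a backlink tool; the function names are hypothetical, and the 30% cutoff is an arbitrary illustration, not an official limit.

```python
from collections import Counter

def anchor_text_shares(anchors):
    """Return each distinct anchor text's share of all links, most common first."""
    normalized = [anchor.strip().lower() for anchor in anchors if anchor.strip()]
    total = len(normalized)
    if total == 0:
        return {}
    counts = Counter(normalized)
    return {text: count / total for text, count in counts.most_common()}

def flag_over_optimized(shares, max_share=0.3):
    """List anchor texts whose share exceeds max_share (an illustrative cutoff)."""
    return [text for text, share in shares.items() if share > max_share]

# Hypothetical backlink export: one commercial phrase dominates the profile.
anchors = ["best SEO agency"] * 8 + ["Example Co", "https://example.com"]
shares = anchor_text_shares(anchors)
flagged = flag_over_optimized(shares)
print(flagged)
```

A natural profile is usually dominated by brand names and bare URLs, so a commercial phrase at the top of this list deserves a closer look.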

6. Technical SEO Audit: The Process

A comprehensive technical audit should be run quarterly, not annually. Here’s the step-by-step process your agency should follow:

- Crawl the site with a tool that supports JavaScript rendering (e.g., Screaming Frog with headless Chrome, Sitebulb, or Lumar, formerly DeepCrawl).

- Check for critical errors: 5xx server errors, 404 pages with high traffic, missing meta descriptions, duplicate title tags.

- Analyze internal linking: Identify orphan pages (no internal links), excessive link depth (more than 4 clicks from homepage), and broken internal links.

- Review indexation status: Compare the number of URLs in the XML sitemap vs. indexed URLs in Google Search Console. If the gap exceeds 20%, investigate.

- Test mobile usability: Google retired its standalone Mobile-Friendly Test in late 2023, so use Lighthouse and Chrome DevTools device emulation instead. Common issues: text too small to read, tap targets too close, viewport not set.

- Validate structured data: Run each page type through Google's Rich Results Test. Fix errors such as missing required properties or incorrect property types.
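
The internal linking step lends itself to a small script. The sketch below assumes the crawl has been exported as a mapping from each page to the pages it links to, plus a list of known URLs (for example, from the XML sitemap). It uses breadth-first search to compute click depth from the homepage; the function names and sample graph are hypothetical, and the depth limit of 4 mirrors the figure in the checklist.

```python
from collections import deque

def click_depths(link_graph, homepage):
    """Breadth-first search: minimum number of clicks from the homepage to each page."""
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in link_graph.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

def audit_internal_links(link_graph, homepage, known_urls, max_depth=4):
    """Return (orphans, too_deep): pages with no inbound links, and pages beyond max_depth."""
    depths = click_depths(link_graph, homepage)
    linked_to = {target for targets in link_graph.values() for target in targets}
    orphans = sorted(
        url for url in known_urls if url not in linked_to and url != homepage
    )
    too_deep = sorted(url for url, depth in depths.items() if depth > max_depth)
    return orphans, too_deep

# Hypothetical crawl export: a long chain plus one page that nothing links to.
link_graph = {
    "/": ["/a"],
    "/a": ["/b"],
    "/b": ["/c"],
    "/c": ["/d"],
    "/d": ["/e"],
}
known_urls = ["/", "/a", "/b", "/c", "/d", "/e", "/orphan"]
orphans, too_deep = audit_internal_links(link_graph, "/", known_urls)
print(orphans, too_deep)
```

Breadth-first search is used because it visits pages in order of distance, so the first depth recorded for a page is its shortest click path.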

7. Briefing Your Agency: A Checklist for Success

To get the most out of your SEO agency, you need to brief them clearly. Here’s a checklist to use before any campaign kickoff:

- Define the primary goal: Is it organic traffic growth, conversion rate improvement, or brand visibility? Each requires a different focus (e.g., traffic = content expansion, conversions = technical UX fixes).

- Provide access: Google Search Console, Google Analytics, server logs, CMS admin, and any third-party tools (Ahrefs, SEMrush, Moz).

- Share business context: Upcoming product launches, site migrations, seasonal trends, competitor moves.

- Set realistic timelines: CrUX reports a rolling 28-day window, so Core Web Vitals improvements typically take 4–8 weeks to show fully in field data. Link building results are usually visible in 3–6 months.

- Define reporting cadence: Monthly dashboards with key metrics (organic sessions, keyword rankings, backlink growth) plus a quarterly deep-dive audit.

- Establish escalation rules: Who approves critical changes (e.g., robots.txt edits, 301 redirect maps)? What’s the SLA for fixing a broken canonical or a 500 error?

Summary

Technical SEO is not a one-time fix—it’s a continuous process of monitoring, testing, and refining. The agencies that deliver lasting results focus on crawl efficiency, Core Web Vitals, canonical hygiene, and quality link acquisition. Avoid any partner that promises unrealistic outcomes or sells black-hat link packages. Instead, look for transparency in reporting, a data-driven audit process, and a willingness to explain the “why” behind each recommendation.

For more on site health fundamentals, read our guide on crawl budget optimization.
