SEO Services Agency: Expert Technical Audits, On-Page Optimization & Site Performance

When a website underperforms in organic search, the instinct is often to blame content or backlinks. Yet the root cause frequently lies deeper, in the architecture of the site itself. An SEO services agency that prioritizes technical audits and on-page optimization operates from a premise that many overlook: search engines must first be able to find, crawl, and understand a page before any ranking signal can be evaluated. Without this foundation, even the most compelling content and the strongest link profile will underdeliver. Technical SEO is not a set of optional enhancements; it is the bedrock on which all other SEO activities depend. This article examines the core components of a rigorous technical SEO program, from crawl budget management to Core Web Vitals optimization, and explains why a methodical, skeptical approach to site performance is the only path to sustainable organic growth.

The Technical SEO Audit: Diagnosing What Search Engines Actually See

A technical SEO audit is not a checklist of superficial fixes. It is a forensic examination of how search engine bots interact with a website's infrastructure. The audit begins with an analysis of crawlability—whether search engines can access all important pages without encountering errors, redirect chains, or blocked resources. A common finding in audits conducted by experienced agencies is that a significant portion of a site's indexable content is either inaccessible or misconfigured. For instance, JavaScript-heavy sites often fail to render critical content for Googlebot, resulting in pages being treated as thin or empty. Similarly, improper use of the `robots.txt` file can inadvertently block entire sections of a site that contain valuable content.
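One way to catch the `robots.txt` problem described above is to test a list of important URLs against the file's rules. The following is a minimal sketch using Python's standard `urllib.robotparser`; the rules and URLs are hypothetical, and the standard-library parser uses simple prefix matching, so it will not reproduce Google's wildcard handling exactly.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are illustrative only.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /blog/
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical pages the business wants crawled.
important_pages = [
    "https://example.com/",
    "https://example.com/blog/technical-seo-guide",
    "https://example.com/products/widget",
]

blocked = blocked_urls(ROBOTS_TXT, important_pages)
print(blocked)
```

Here the blanket `Disallow: /blog/` rule blocks the guide page, which is the kind of accidental exclusion an audit is meant to surface.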

The audit then moves to indexation. Here, the focus shifts to duplicate content, canonical tags, and sitemap accuracy. Duplicate content is not a penalty in the traditional sense, but it dilutes ranking signals across multiple URLs, making it harder for any single version to gain authority. An effective technical audit identifies patterns of duplication, whether from URL parameters, session IDs, or printer-friendly versions, and implements a canonicalization strategy that consolidates signals to the preferred URL. The XML sitemap, often treated as a formality, must be kept up to date so that it lists only indexable, canonical URLs. Stale sitemaps that include redirecting URLs or pages carrying a `noindex` tag waste crawl budget and mislead search engines about a site's true structure.
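Parameter-driven duplication can be surfaced by normalizing crawled URLs and grouping the ones that collapse to the same address. The sketch below assumes a hypothetical list of parameters (`IGNORED_PARAMS`) that never change page content; on a real site that list has to be confirmed per parameter before it is used to choose canonical URLs.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumption: these parameters never change the content of the page.
IGNORED_PARAMS = {"sessionid", "utm_source", "utm_medium", "utm_campaign", "print"}


def normalize(url: str) -> str:
    """Drop ignorable parameters, sort the remaining ones, and strip the fragment."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS
    )
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))


def duplicate_groups(urls: list[str]) -> dict[str, list[str]]:
    """Map each normalized URL to its crawled variants, keeping only real duplicates."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {canonical: variants for canonical, variants in groups.items() if len(variants) > 1}


# Hypothetical crawl output.
crawled = [
    "https://example.com/shoes?sessionid=abc123",
    "https://example.com/shoes?utm_source=newsletter",
    "https://example.com/shoes?print=1",
    "https://example.com/shoes?color=red",
]

groups = duplicate_groups(crawled)
```

In this example the first three URLs collapse to `https://example.com/shoes`, which makes it the natural canonical target, while the `color=red` variant stays separate because it may show different content.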

Crawl Budget Allocation: Prioritizing What Matters Most

For large websites such as e-commerce platforms, news publishers, or enterprise portals, crawl budget is a finite resource. How much Googlebot crawls depends on two things: how many requests the server can handle without slowing down, and how much demand there is for the site's content, which is shaped by its popularity and update frequency. If a site has thousands of low-value URLs (such as filtered product pages, paginated archives, or thin affiliate pages), those pages consume crawl budget that could otherwise be spent on high-priority content. An SEO agency that understands crawl budget dynamics will conduct a crawl ratio analysis, comparing the number of URLs discovered with the number actually indexed. Discrepancies here often point to structural issues: orphan pages that no internal link points to, or parameter-generated URLs that create effectively infinite spaces for bots to explore.
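A crawl ratio analysis of this kind reduces to set arithmetic once the URL lists are exported. The sketch below is illustrative: the function name, the three input lists, and the sample paths are all assumptions, standing in for exports from a crawler, an index coverage report, and an internal link graph.

```python
def crawl_ratio_report(discovered, indexed, internally_linked):
    """Compare discovered URLs with indexed ones and flag orphan pages."""
    discovered = set(discovered)
    indexed = set(indexed)
    internally_linked = set(internally_linked)

    # Share of discovered URLs that are also indexed.
    ratio = len(discovered & indexed) / len(discovered) if discovered else 0.0
    return {
        "index_ratio": ratio,
        "not_indexed": sorted(discovered - indexed),
        # Orphans: known URLs that no internal link points to.
        "orphans": sorted(discovered - internally_linked),
    }


# Hypothetical exports.
report = crawl_ratio_report(
    discovered=["/", "/products/a", "/products/a?sort=price", "/old-landing"],
    indexed=["/", "/products/a"],
    internally_linked=["/", "/products/a", "/products/a?sort=price"],
)
print(report)
```

In this sample, half of the discovered URLs are indexed, the sorted-parameter URL is crawlable but unindexed, and `/old-landing` is an orphan.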

The solution involves a combination of technical controls and strategic pruning. `robots.txt` directives can block crawling of low-value sections, while `noindex` tags prevent indexing without blocking crawling. The two should not be combined on the same URL: a crawler that is blocked by `robots.txt` never fetches the page and so never sees its `noindex` tag. However, the most effective approach is to improve internal linking architecture so that important pages receive more link equity and, consequently, more frequent crawling. This is where the intersection of technical SEO and content strategy becomes critical: a well-structured site with clear hierarchical navigation signals to search engines which pages are authoritative and deserve regular re-crawling.
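One simple way to evaluate internal linking architecture is click depth: the minimum number of links a crawler must follow from the homepage to reach a page. The sketch below computes it with a breadth-first search over a hypothetical link graph; click depth is used here as a rough proxy for how prominent a page is, not as a measure search engines are documented to use directly.

```python
from collections import deque


def click_depths(links: dict[str, list[str]], start: str = "/") -> dict[str, int]:
    """Breadth-first search returning the minimum click depth of each reachable page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


# Hypothetical internal link graph: page -> pages it links to.
site_links = {
    "/": ["/category", "/about"],
    "/category": ["/category/page-2"],
    "/category/page-2": ["/products/widget"],
}

depths = click_depths(site_links)
deep_pages = [page for page, depth in depths.items() if depth >= 3]
```

Here `/products/widget` sits three clicks from the homepage, reachable only through pagination. Linking to it from the category page or the homepage would shorten that path.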

Core Web Vitals and Site Performance: The User Experience Imperative

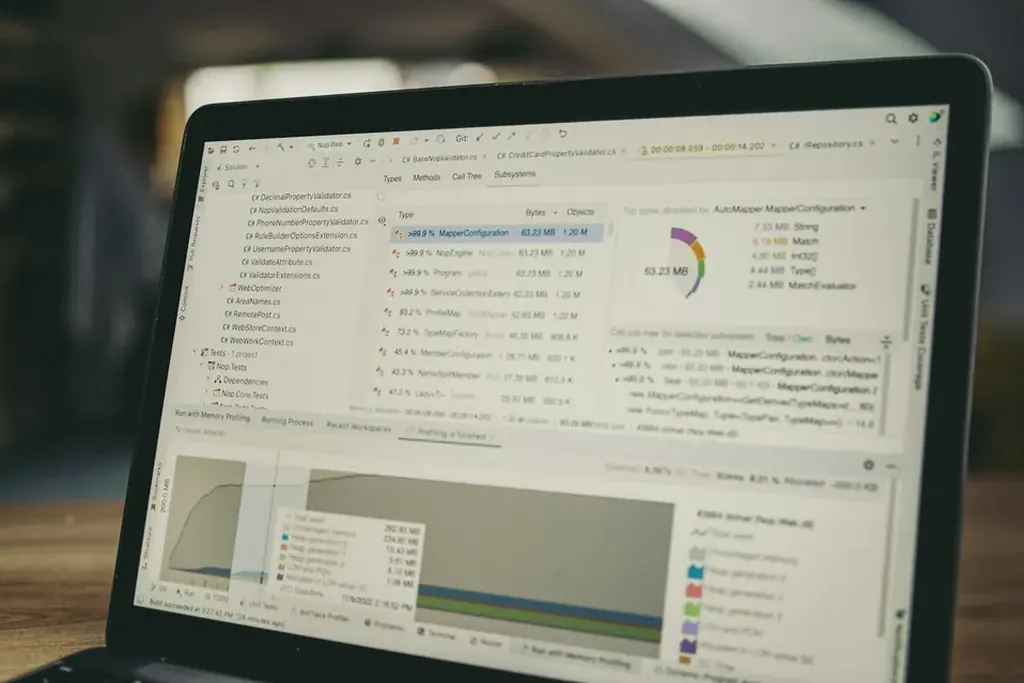

Google’s Core Web Vitals are Largest Contentful Paint (LCP), Interaction to Next Paint (INP, which replaced First Input Delay in March 2024), and Cumulative Layout Shift (CLS). They are not merely metrics to monitor; they are direct indicators of user experience that now influence search rankings. An SEO agency that treats performance optimization as a one-time project rather than an ongoing discipline will see gains erode as new content and features are added. The challenge is that Core Web Vitals are affected by a wide range of factors, including server response times, image optimization, third-party scripts, font loading, and DOM size.

A methodical performance audit begins with field data from real users, not lab-based simulations. Lab data from tools like Lighthouse provides controlled conditions, but field data, whether from the site's own real-user monitoring (RUM) or from the Chrome User Experience Report (CrUX), reveals how actual users experience the site across different devices and network conditions. An agency that relies solely on lab scores risks optimizing for conditions that do not reflect reality. For example, a site might achieve an excellent LCP in a simulated desktop environment but fail dramatically on mobile devices with slow 3G connections. The fix often involves implementing resource hints (`preload`, `preconnect`, `prefetch`), lazy-loading non-critical assets, and optimizing web font loading to minimize render-blocking.
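Field data is usually judged at the 75th percentile against Google's published thresholds: LCP of 2.5 seconds or less, INP of 200 milliseconds or less, and CLS of 0.1 or less count as good. The sketch below applies those thresholds to hypothetical samples; the metric keys, the nearest-rank percentile method, and the sample values are assumptions made for illustration.

```python
import math

# (good, poor) boundaries per metric; values between the two need improvement.
THRESHOLDS = {
    "lcp_ms": (2500, 4000),
    "inp_ms": (200, 500),
    "cls": (0.1, 0.25),
}


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list of samples."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def rate(metric, samples):
    """Return the 75th-percentile value and its rating for one metric."""
    good, poor = THRESHOLDS[metric]
    p75 = percentile(samples, 75)
    if p75 <= good:
        return p75, "good"
    if p75 <= poor:
        return p75, "needs improvement"
    return p75, "poor"


# Hypothetical field samples for one page template.
lcp_samples = [1800, 2100, 2400, 3900, 5200, 2200, 2600, 3100]
cls_samples = [0.02, 0.05, 0.08, 0.30]

lcp_result = rate("lcp_ms", lcp_samples)
cls_result = rate("cls", cls_samples)
```

In this sample, most LCP readings are fast, yet the 75th percentile is 3100 ms, so the page rates as needing improvement. An average or a single lab run would hide that.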

On-Page Optimization: Beyond Meta Tags

On-page optimization has evolved far beyond keyword-stuffed title tags and meta descriptions. Modern on-page SEO, as practiced by expert agencies, is a discipline that integrates semantic relevance, structured data, and user intent mapping. The goal is not to rank for a single keyword but to satisfy the full spectrum of search intents—informational, navigational, commercial, and transactional—that a topic encompasses. This requires a content strategy that maps keywords to specific stages of the user journey, then optimizes each page’s structure, headings, internal links, and multimedia elements accordingly.

A critical but often neglected aspect of on-page optimization is the use of schema markup. Structured data allows search engines to understand the context of content, whether it is a product, article, FAQ, event, or recipe. Properly implemented schema can make a page eligible for rich results such as review snippets, product details, and carousels, which can increase click-through rates even if the page does not rank first. Featured snippets and knowledge panels, by contrast, are generated by the search engine and are not switched on by markup. Schema must also be accurate and representative of the content. Misleading markup, such as marking a general blog post as an FAQ without actual questions and answers, can lead to manual actions or loss of rich result eligibility.
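Schema markup is most commonly embedded as JSON-LD inside a `script` tag. The sketch below builds a minimal schema.org `Product` snippet from page data; the function name and the sample product are hypothetical, and a real page would need its required and recommended properties checked against the relevant rich result documentation.

```python
import json


def product_jsonld(name, description, price, currency, url):
    """Build a JSON-LD script tag describing a product that is visible on the page."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock",
        },
    }
    # Escape "</" so a value can never close the script tag early.
    body = json.dumps(data, indent=2).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Hypothetical product data; it must match what the page actually shows.
snippet = product_jsonld(
    name="Trail Runner",
    description="Lightweight trail running shoe.",
    price=89.5,
    currency="USD",
    url="https://example.com/products/trail-runner",
)
print(snippet)
```

Generating the markup from the same data that renders the page is one way to keep the two from drifting apart, which is the accuracy requirement described above.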

Keyword Research and Intent Mapping: The Data-Driven Foundation

Keyword research in a professional SEO agency setting is not about generating a list of high-volume terms. It is a systematic process of identifying search queries that align with business objectives, assessing their competitive landscape, and mapping them to pages that can realistically satisfy the intent. The distinction between head terms (high volume, high competition) and long-tail queries (lower volume, higher conversion potential) is well known, but the nuance lies in understanding the intent behind each query. A user searching "best running shoes for flat feet" is in a commercial investigation phase, not ready to buy but comparing options. A page optimized for this query should include comparison tables, expert reviews, and clear product recommendations—not a generic product category page.
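A first pass at intent mapping can be automated with modifier words before a human reviews the results. The sketch below is a deliberately crude heuristic: the modifier lists and the rule order are assumptions, and navigational intent is left out because detecting it requires a list of brand names.

```python
import re

# Assumed modifier lists; the first matching rule wins.
INTENT_RULES = [
    ("transactional", re.compile(r"\b(buy|order|coupon|discount|cheap)\b")),
    ("commercial", re.compile(r"\b(best|top|review|reviews|vs|compare|comparison)\b")),
    ("informational", re.compile(r"\b(how to|what is|why|guide|tutorial)\b")),
]


def classify_intent(query: str) -> str:
    """Label a search query by the first intent rule whose modifier it contains."""
    text = query.lower()
    for intent, pattern in INTENT_RULES:
        if pattern.search(text):
            return intent
    return "unclassified"


# Hypothetical keyword list.
queries = [
    "best running shoes for flat feet",
    "buy running shoes online",
    "how to clean running shoes",
    "running shoes",
]

intents = {query: classify_intent(query) for query in queries}
```

The query from the paragraph above is labeled commercial, which matches its comparison-stage intent. Queries without a modifier stay unclassified and need manual review of the actual search results.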

Intent mapping also informs content strategy for informational queries. Many sites attempt to rank for "how to" or "what is" queries with thin, superficial content that fails to demonstrate expertise. An agency that conducts thorough keyword research will identify opportunities to create comprehensive guides that cover subtopics, answer related questions, and link to deeper resources. This approach not only improves rankings for the primary query but also establishes topical authority, which search engines reward with broader visibility across related searches.

Link Building and Backlink Profile Management: Quality Over Quantity

Links remain an important ranking signal, but the methodology for earning them has shifted dramatically. Mass directory submissions, comment spam, and private blog networks violate search engine spam policies and are far more likely to be ignored or penalized than rewarded. Modern link building, as practiced by reputable SEO agencies, focuses on earning links through digital PR, content partnerships, and resource-based outreach. The key metric is not the raw number of backlinks but the quality and relevance of the linking domains. A single link from a high-authority, thematically relevant site can have more impact than hundreds of low-quality links from unrelated directories.

Backlink profile management involves continuous monitoring for toxic links: those from spammy sites, link farms, or penalized domains. While Google’s algorithms are increasingly adept at ignoring such links, a manual action can still occur if a site engages in aggressive link schemes. An agency that prioritizes link building over backlink profile hygiene is taking unnecessary risk. Regular audits draw on third-party metrics such as domain authority, trust flow, and link velocity. These are estimates produced by SEO tools, not signals Google uses, so they are best treated as a way to prioritize manual review. Where a site has a manual action, or a large volume of clearly manipulative links that could plausibly cause one, the agency can submit a disavow file. The goal is a natural link profile that grows organically over time, reflecting genuine endorsement from authoritative sources.

Risks and Limitations: The Reality of SEO Outcomes

No SEO agency can guarantee rankings, traffic, or revenue. The search landscape is subject to frequent algorithm updates, competitor actions, and shifts in user behavior. A site that performs well today may see its rankings decline tomorrow due to a Google core update that changes how relevance is evaluated. Moreover, technical SEO fixes can take weeks or months to manifest in improved rankings, and even then, the impact depends on factors outside the agency’s control, such as the site’s historical authority, industry competition, and content quality.

A responsible agency communicates these limitations clearly. They set realistic expectations based on data, not promises. They provide transparent reporting that shows progress in crawl efficiency, indexation rates, Core Web Vitals scores, and keyword visibility—not fabricated traffic projections. And they build contingency plans for algorithm changes, ensuring that the site’s technical foundation is robust enough to withstand volatility.

Summary

An SEO services agency that delivers lasting value operates at the intersection of technical precision and strategic foresight. The process begins with a comprehensive technical audit that uncovers crawl and indexation issues, followed by performance optimization that improves user experience and search rankings simultaneously. On-page optimization and keyword research are not isolated activities but components of a unified content strategy that aligns with user intent. Link building and backlink profile management require ongoing vigilance, prioritizing quality over quantity. And throughout, the agency must maintain a skeptical, data-driven approach, acknowledging that SEO outcomes are influenced by factors beyond its control. For businesses seeking sustainable organic growth, the choice is not between technical SEO and content marketing—it is between a fragmented approach and a disciplined, integrated program that treats the entire site as a ranking signal.
