How to Evaluate and Brief an Expert SEO Agency: A Technical Checklist for Site Health and Performance

You have identified a gap in your organic visibility and are considering engaging an SEO agency. The decision is not trivial: a poorly executed technical audit can waste months of crawl budget, while a misguided link-building campaign can trigger a manual penalty that takes years to reverse. This checklist is designed for marketing managers and technical leads who need to brief an agency with precision, distinguish genuine expertise from surface-level promises, and establish a measurable foundation for site health and performance.

1. Define the Scope of the Technical SEO Audit

Before any outreach or content planning, the agency must conduct a comprehensive technical audit. This is not a one-time report; it is the diagnostic phase that uncovers crawl inefficiencies, indexing gaps, and performance bottlenecks. A credible agency will treat the audit as a living document, updated as search engine algorithms evolve.

Key deliverables you should expect from the audit:

- Full crawl analysis using enterprise-grade tools (e.g., Screaming Frog, Lumar (formerly DeepCrawl), or custom crawlers)

- Identification of crawl budget waste (e.g., infinite parameter URLs, soft 404s, thin content pages)

- Assessment of Core Web Vitals (LCP, CLS, and INP, which replaced FID in March 2024) with field data from the Chrome User Experience Report (CrUX)

- Evaluation of XML sitemap structure and robots.txt directives

- Detection of duplicate content and canonical tag misconfigurations

Red flags to watch for:

- The agency guarantees first-page rankings for competitive terms within 30 days

- The audit report contains no raw data or tool outputs—only summarized opinions

- They recommend immediate link building without first fixing technical issues

Common technical issues the audit should surface:

| Issue | Impact on Crawl & Index | Typical Cause |
|---|---|---|
| Missing or incorrect canonical tags | Indexation of duplicate content, diluted link equity | CMS misconfiguration, URL parameter handling |
| Blocked resources in robots.txt | Incomplete rendering, poor Core Web Vitals assessment | Overly restrictive disallow directives |
| Excessive redirect chains | Wasted crawl budget, delayed indexing | Legacy URL migrations without cleanup |
| Slow LCP (above 2.5 seconds) | Poor user experience, weaker page experience signal | Unoptimized images, render-blocking scripts |
| High CLS (above 0.1) | Negative user perception, lower engagement | Layout shifts from late-loading ads or fonts |
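Redirect chains are among the easier issues in the table to verify from a crawl export. The following is a minimal sketch, assuming the export has already been reduced to a source-to-target mapping; the function names and sample URLs are hypothetical and not taken from any particular crawler.

```python
from typing import Dict, List


def redirect_chain(start: str, redirects: Dict[str, str], max_hops: int = 10) -> List[str]:
    """Follow the redirect map from `start` and return every URL visited, in order."""
    chain = [start]
    seen = {start}
    current = start
    while current in redirects and len(chain) <= max_hops:
        current = redirects[current]
        chain.append(current)
        if current in seen:  # redirect loop: stop after recording the repeat
            break
        seen.add(current)
    return chain


def long_chains(redirects: Dict[str, str], max_redirects: int = 1) -> Dict[str, List[str]]:
    """Return chains that need more than `max_redirects` hops to resolve.

    Only entry points (URLs that nothing else redirects to) are reported,
    so a pure loop with no entry point is not covered by this sketch.
    """
    targets = set(redirects.values())
    report = {}
    for url in redirects:
        if url in targets:
            continue
        chain = redirect_chain(url, redirects)
        if len(chain) - 1 > max_redirects:
            report[url] = chain
    return report


# Hypothetical sample: a legacy migration that was never cleaned up.
SAMPLE = {"/old-a": "/old-b", "/old-b": "/old-c", "/old-c": "/new", "/promo": "/sale"}

if __name__ == "__main__":
    for entry, chain in long_chains(SAMPLE).items():
        print(entry, "->", " -> ".join(chain[1:]))
```

Each reported entry point can then be redirected straight to the final URL, which is the cleanup the table row refers to.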

2. Establish a Crawl Budget Strategy and Sitemap Governance

Crawl budget is the number of URLs Googlebot can and wants to crawl on your site in a given period, and it is finite. Wasting it on low-value pages means high-value content may remain undiscovered or under-refreshed. A sophisticated agency will analyze your server logs to understand how Googlebot actually behaves, not just how it theoretically should.
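To make the log analysis concrete, here is a rough sketch of what the first pass might look like. It assumes access logs in the common "combined" format and buckets Googlebot requests by top-level site section and status code; the regular expression, bucket names, and sample lines are illustrative assumptions, and a real analysis should also verify Googlebot by reverse DNS because the user-agent string can be spoofed.

```python
import re
from collections import Counter
from typing import Iterable

# Assumed "combined" log format: ip ident user [time] "METHOD path proto" status bytes "referer" "agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def section_of(path: str) -> str:
    """Bucket a path by its first segment; URLs with a query string get their own bucket."""
    if "?" in path:
        return "(parameterized)"
    segment = path.strip("/").split("/")[0]
    return "/" + segment if segment else "/"


def googlebot_hits(lines: Iterable[str]) -> Counter:
    """Count requests whose user agent claims to be Googlebot, keyed by (section, status)."""
    hits: Counter = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits[(section_of(match.group("path")), match.group("status"))] += 1
    return hits


# Hypothetical sample lines for illustration only.
SAMPLE_LOG = [
    '66.249.66.1 - - [10/Oct/2024:13:55:36 +0000] "GET /products/shoe-1 HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Oct/2024:13:55:40 +0000] "GET /search?color=red&sort=price HTTP/1.1" 200 900 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.9 - - [10/Oct/2024:13:56:01 +0000] "GET /products/shoe-1 HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
]

if __name__ == "__main__":
    for (section, status), count in googlebot_hits(SAMPLE_LOG).most_common():
        print(section, status, count)
```

A large share of hits in the parameterized bucket, or on sections you do not consider a priority, is the kind of crawl waste the agency should be able to show you with raw numbers.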

Actionable steps for your brief:

- Request a log file analysis (if you have access) or ask the agency to use Google Search Console’s crawl stats report

- Define which URL patterns should be prioritized (e.g., product pages, category hubs, cornerstone content)

- Ensure the XML sitemap is dynamic, includes only canonical URLs, and is updated whenever new content is published

- Configure robots.txt to allow crawling of CSS, JS, and image files while blocking thin affiliate pages or session-based URLs
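The robots.txt step above can be checked before deployment. The sketch below uses Python's standard-library `urllib.robotparser` to test a draft file against URLs that must stay crawlable and URLs that should be blocked. The robots.txt content, URL lists, and the `audit_robots` helper are hypothetical. Note that this parser uses simple prefix matching, so rules relying on the `*` and `$` wildcards that Google supports should be verified with a Google-compatible tester instead.

```python
from typing import List
from urllib.robotparser import RobotFileParser


def audit_robots(robots_txt: str, must_allow: List[str], must_block: List[str],
                 agent: str = "Googlebot") -> List[str]:
    """Return a list of human-readable problems found in a draft robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    problems = []
    for url in must_allow:
        if not parser.can_fetch(agent, url):
            problems.append(f"blocked but should be crawlable: {url}")
    for url in must_block:
        if parser.can_fetch(agent, url):
            problems.append(f"crawlable but should be blocked: {url}")
    return problems


# Hypothetical draft: the /assets/js/ rule is the kind of mistake that breaks rendering.
DRAFT = """\
User-agent: *
Disallow: /affiliate/
Disallow: /session/
Disallow: /assets/js/
"""

MUST_ALLOW = [
    "https://example.com/assets/css/site.css",
    "https://example.com/assets/js/app.js",
    "https://example.com/images/hero.jpg",
]
MUST_BLOCK = [
    "https://example.com/session/abc123",
    "https://example.com/affiliate/thin-page",
]

if __name__ == "__main__":
    for problem in audit_robots(DRAFT, MUST_ALLOW, MUST_BLOCK):
        print(problem)
```

Asking the agency to maintain such allow and block lists alongside robots.txt gives you a simple regression check whenever the file changes.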

3. Integrate Core Web Vitals into the Performance Baseline

Core Web Vitals are not optional metrics; they are part of Google’s ranking system. However, chasing perfect scores without understanding the underlying user experience is counterproductive. An expert agency will correlate lab data (from Lighthouse) with field data (from CrUX) to identify real-world bottlenecks.

Checklist for briefing performance optimization:

- Ask for baseline measurements of LCP, CLS, and INP using both lab and field data

- Prioritize fixes based on the proportion of users affected (e.g., 70% of visitors experiencing poor LCP)

- Avoid “quick fixes” that can backfire, such as lazy-loading hero images (which delays the LCP element) or bulk-converting all images to WebP without quality checks

- Implement a performance budget for new pages (e.g., LCP under 2.0 seconds, CLS under 0.05)

- Set up monitoring with real user monitoring (RUM) tools to track changes over time
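As a rough illustration of the checklist above, the sketch below summarizes raw RUM samples the way field data is usually assessed: at the 75th percentile, against Google's published good and poor thresholds, plus the share of page views in the poor range. The function names and sample values are hypothetical, and the budget figures are simply the example numbers from the checklist.

```python
import math
from typing import Dict, List, Union

# (upper bound for "good", lower bound for "poor") per metric.
THRESHOLDS = {"lcp_ms": (2500, 4000), "inp_ms": (200, 500), "cls": (0.1, 0.25)}

# Example performance budget for new pages, taken from the checklist above.
BUDGET = {"lcp_ms": 2000, "cls": 0.05}


def p75(values: List[float]) -> float:
    """75th percentile using the nearest-rank method."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def rate(metric: str, value: float) -> str:
    """Classify a value as good, needs improvement, or poor."""
    good_max, poor_min = THRESHOLDS[metric]
    if value <= good_max:
        return "good"
    if value > poor_min:
        return "poor"
    return "needs improvement"


def summarize(metric: str, samples: List[float]) -> Dict[str, Union[float, str, bool]]:
    """Summarize one metric: p75, rating, share of poor samples, and budget status."""
    _, poor_min = THRESHOLDS[metric]
    value = p75(samples)
    summary = {
        "p75": value,
        "rating": rate(metric, value),
        "share_poor": sum(s > poor_min for s in samples) / len(samples),
    }
    if metric in BUDGET:
        summary["within_budget"] = value < BUDGET[metric]
    return summary


# Hypothetical LCP samples in milliseconds from a RUM tool.
LCP_SAMPLES = [1500, 1800, 2000, 2100, 2300, 2600, 3900, 4200]

if __name__ == "__main__":
    print(summarize("lcp_ms", LCP_SAMPLES))
```

Running the same summary per page template rather than site-wide usually makes it clearer which fixes affect the most users.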

4. On-Page Optimization and Intent Mapping: Beyond Keyword Stuffing

On-page optimization has evolved from meta tag gymnastics to a discipline of intent mapping and semantic relevance. An agency that still focuses on keyword density or exact-match anchor text is operating with outdated methods. The correct approach aligns each page with a specific search intent—informational, navigational, commercial, or transactional.

How to brief on-page work:

- Provide the agency with your existing keyword research and ask them to categorize keywords by intent

- Request a content gap analysis that compares your site’s topical coverage against competitors

- Ensure title tags and meta descriptions are written for click-through rate, not just keyword inclusion

- Implement structured data (schema.org) for relevant content types: articles, products, FAQs, local business

| Search Query | Intent | Recommended Page Type | Key On-Page Elements |
|---|---|---|---|
| “best running shoes for flat feet” | Commercial investigation | Comparison guide or listicle | Product comparisons, user reviews, schema for FAQ |
| “buy Nike Air Zoom Pegasus 40” | Transactional | Product detail page | Clear CTA, pricing, stock availability, reviews |
| “how to clean running shoes” | Informational | Blog post or video | Step-by-step instructions, embedded video, internal links to product pages |
| “Nike store near me” | Navigational | Location page or Google Business Profile | Address, hours, map, local schema |

Common pitfall: Creating separate pages for every slight variation of a keyword (e.g., “SEO services,” “SEO agency,” “SEO company”) without consolidating intent. This creates duplicate content and dilutes link equity. An expert agency will recommend canonicalization or 301 redirects to consolidate similar pages.
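For the structured data item in the briefing list, it helps to know what the output should look like so you can review what the agency ships. The following is a minimal sketch that builds schema.org `FAQPage` markup as JSON-LD; the helper name and the sample question are hypothetical, and whether FAQ markup is appropriate for a given page is a separate editorial decision.

```python
import json
from typing import List, Tuple


def faq_jsonld(pairs: List[Tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    # Escape "</" so answer text cannot close the surrounding <script> element.
    return json.dumps(data, indent=2).replace("</", "<\\/")


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element that goes into the page HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


if __name__ == "__main__":
    sample = [("How often should I replace running shoes?",
               "A common guideline is every 300 to 500 miles, depending on wear.")]
    print(script_tag(faq_jsonld(sample)))
```

The questions and answers in the markup should match content that is visible on the page; markup that describes content users cannot see is a common reason for structured data to be ignored.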

5. Link Building and Backlink Profile Management: Quality Over Volume

Link building remains a high-risk, high-reward activity. Black-hat tactics such as private blog networks (PBNs), paid links, and automated directory submissions can produce short-term gains but frequently end in manual actions or algorithmic devaluation. A reputable agency will focus on earned links through content-driven outreach and digital PR.

What to include in your link-building brief:

- Define acceptable link sources: reputable industry publications, .edu and .gov domains (when relevant), niche-specific blogs with editorial oversight

- Reject any proposal that involves link exchanges, paid placements, or “guaranteed DA 50+ links”

- Request a backlink profile audit using tools like Ahrefs, Majestic, or Semrush to identify toxic links

- Establish a disavow strategy for spammy links, but only after confirming they are genuinely harmful (Google’s John Mueller has noted that most sites do not need disavow files)

| Approach | Risk Level | Typical Time to Impact | Sustainability |
|---|---|---|---|
| Guest posting on relevant sites | Low to medium | 3–6 months | High, if content is valuable |
| Digital PR (data-driven newsjacking) | Low | 1–3 months | High, but requires resources |
| Broken link building | Low | 2–4 months | Medium, depends on scale |
| PBNs or paid links | Very high | Immediate (short-term) | Very low, penalty risk high |
| Forum/spam comments | High | None or negative | Negligible |

Red flag: If an agency promises to increase your Domain Authority (DA) or Trust Flow (TF) by a specific number within a fixed period, treat that as a warning. DA and TF are third-party metrics, not Google ranking factors. They can be manipulated, and focusing on them often leads to low-quality link acquisition.

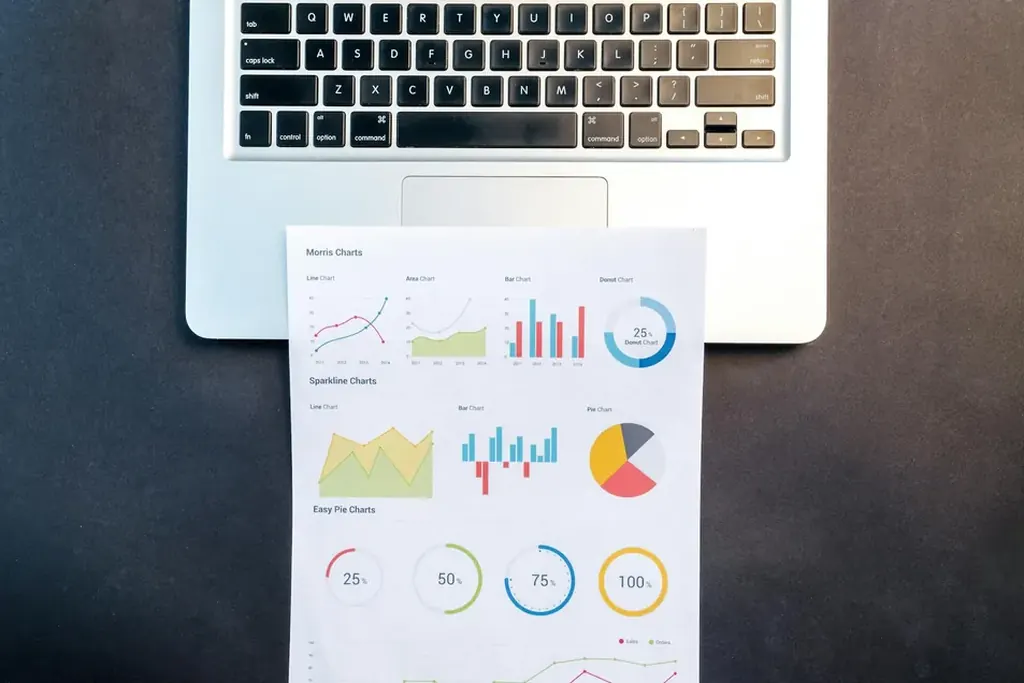

6. Reporting and Continuous Improvement: Moving Beyond Vanity Metrics

A final, critical component of your agency brief is the reporting framework. Many agencies provide dashboards that show rankings, traffic, and backlinks without context. These are vanity metrics unless they are tied to business outcomes. Your reporting should answer three questions: Are we attracting the right visitors? Are they engaging meaningfully? Are they converting?

What to demand in reports:

- Organic traffic segmented by landing page and search intent (not just total sessions)

- Conversion tracking for goal completions (purchases, form fills, phone calls)

- Crawl budget efficiency: number of pages indexed vs. crawled per week

- Core Web Vitals improvement over time, with field data from CrUX

- Backlink quality analysis: ratio of dofollow to nofollow, domain diversity, topical relevance
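The backlink quality item in the list above can be grounded in a few simple numbers. This sketch assumes a backlink export reduced to (source URL, `rel` attribute) pairs and reports referring-host diversity and the share of followed links. The function and field names are hypothetical, and grouping by hostname rather than registrable domain is a deliberate simplification.

```python
from collections import Counter
from typing import Dict, List, Tuple
from urllib.parse import urlsplit

# rel values that tell search engines not to treat the link as an endorsement.
NON_FOLLOWED = {"nofollow", "ugc", "sponsored"}


def summarize_backlinks(links: List[Tuple[str, str]]) -> Dict[str, float]:
    """Summarize a backlink export given (source_url, rel_attribute) pairs."""
    hosts: Counter = Counter()
    followed = 0
    for source_url, rel in links:
        host = urlsplit(source_url).hostname or ""
        if host.startswith("www."):
            host = host[4:]
        hosts[host] += 1
        if not NON_FOLLOWED & set(rel.lower().split()):
            followed += 1
    total = len(links)
    return {
        "total_links": total,
        "referring_hosts": len(hosts),
        "followed_share": followed / total if total else 0.0,
        # Share of all links coming from the single largest host (concentration).
        "top_host_share": max(hosts.values()) / total if total else 0.0,
    }


# Hypothetical export rows for illustration only.
SAMPLE_LINKS = [
    ("https://www.example-news.com/story-a", ""),
    ("https://example-news.com/story-b", "nofollow"),
    ("https://blog.example.org/post", "sponsored noopener"),
    ("https://uni.example.edu/resources", ""),
]

if __name__ == "__main__":
    print(summarize_backlinks(SAMPLE_LINKS))
```

Topical relevance still needs human review; these numbers only flag profiles that are dominated by one source or by links that carry little weight.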

