Expert SEO Agency Services: Technical Audits, Content Strategy & Site Performance Optimization

The gap between a website that generates predictable organic revenue and one that languishes on page five of search results is rarely a matter of luck. It is the product of systematic technical health, strategic content alignment, and sustained performance optimization. Many organizations invest heavily in paid acquisition while their organic channels remain underleveraged, often because they lack the specialized expertise to diagnose and resolve the layered issues that suppress visibility. An expert SEO agency does not simply apply a checklist of on-page tweaks; it conducts a forensic examination of the site’s architecture, crawlability, content ecosystem, and backlink profile to identify the specific bottlenecks that prevent search engines from recognizing and rewarding the site’s authority. This article dissects the core pillars of professional SEO services—technical audits, content strategy, and site performance optimization—and explains how each component interacts to produce measurable, defensible organic growth.

The Technical SEO Audit: Diagnosing the Foundation

A technical SEO audit is the diagnostic equivalent of a full-body scan for a website. It examines the underlying infrastructure that determines whether search engine crawlers can efficiently discover, index, and understand a site’s content. Without a clean technical foundation, even the most compelling content and the strongest backlink profile will fail to deliver results because search engines cannot properly evaluate the site’s value.

The audit begins with an analysis of crawl budget: the finite number of pages a search engine will crawl on a given site within a specific timeframe. Crawl budget matters most for very large sites and for sites whose content changes daily. On those sites, inefficient crawl allocation can leave important URLs undiscovered or stale. If a search engine wastes its crawl allowance on thin pages, duplicate content, or redirect chains, it may never reach the site’s most valuable pages. An expert agency identifies these inefficiencies by examining server logs, analyzing crawl patterns, and correlating them with indexation data. Common issues include excessive parameterized URLs, orphaned pages that lack internal links, and bloated XML sitemaps that include low-value URLs.
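Log-file analysis of this kind can be sketched in a few lines of Python. The sketch assumes access logs in the common Apache/Nginx combined format and uses a hypothetical four-way taxonomy (clean, parameterized, redirect, error). It identifies Googlebot by user-agent string only. The user agent is easily spoofed, so a production version should also verify crawler IPs with a reverse DNS lookup.

```python
import re
from collections import Counter
from urllib.parse import urlsplit

# Captures the request path, status code, and user agent from a combined-format log line.
LOG_PATTERN = re.compile(
    r'"(?:GET|HEAD) (?P<path>\S+) HTTP/[^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

def classify(path: str, status: int) -> str:
    """Bucket one crawled URL into a hypothetical crawl-waste category."""
    if 300 <= status < 400:
        return "redirect"
    if status >= 400:
        return "error"
    if urlsplit(path).query:
        return "parameterized"
    return "clean"

def googlebot_crawl_mix(lines):
    """Return the share of Googlebot requests falling into each category."""
    counts = Counter()
    for line in lines:
        match = LOG_PATTERN.search(line)
        # Skip unparseable lines and non-Googlebot traffic (user-agent check only).
        if not match or "Googlebot" not in match["agent"]:
            continue
        counts[classify(match["path"], int(match["status"]))] += 1
    total = sum(counts.values())
    return {category: n / total for category, n in counts.items()} if total else {}
```

A large share of parameterized, redirect, or error requests is the cue to dig deeper. The categories can be extended with rules for paths known to hold thin content.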

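The robots.txt file is another frequent source of technical debt. A single misplaced Disallow directive can block entire sections of a site from being crawled, including content that is critical for ranking. Conversely, failing to block low-value areas, such as internal search results or endless filter combinations, wastes crawl budget. robots.txt is not an access-control mechanism, however. The file is publicly readable and compliance is voluntary, so admin panels and staging environments should be protected with authentication rather than a Disallow rule. A disallowed URL can also still be indexed, without its content, if other pages link to it. The audit verifies that the robots.txt file allows access to key content, excludes low-value URL spaces, and does not advertise sensitive paths.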
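One way to make that verification repeatable is to test the rules against a list of URLs that must stay crawlable and a list that must stay blocked. This sketch uses Python’s standard urllib.robotparser. The rules and URL lists are hypothetical. Python’s parser applies the first matching rule, whereas Google applies the most specific (longest) match. Files that mix overlapping Allow and Disallow lines should therefore also be checked in Google Search Console.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content under test.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /cart
"""

# Hypothetical URLs that must remain crawlable or must remain blocked.
MUST_ALLOW = ["https://www.example.com/", "https://www.example.com/products/blue-widget"]
MUST_BLOCK = ["https://www.example.com/admin/settings", "https://www.example.com/cart?id=7"]

def check_robots(robots_txt, must_allow, must_block, agent="Googlebot"):
    """Return (wrongly_blocked, wrongly_allowed) URL lists for the given rules."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    wrongly_blocked = [url for url in must_allow if not parser.can_fetch(agent, url)]
    wrongly_allowed = [url for url in must_block if parser.can_fetch(agent, url)]
    return wrongly_blocked, wrongly_allowed
```

Running this check in a deployment pipeline catches a stray "Disallow: /" before it reaches production.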

Canonical tags are the primary signal for resolving duplicate content issues, although search engines treat rel="canonical" as a strong hint rather than a binding directive. When multiple URLs contain substantially similar content, search engines may split ranking signals across the duplicates, diluting the authority of any single version. Examples include product pages reachable through several category paths and URLs carrying session IDs. Proper canonicalization consolidates these signals onto the preferred URL. An audit maps the entire site’s canonical strategy, identifying instances where canonicals are missing, contradictory, or pointing to non-indexable pages.
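A per-page canonical check can be sketched with Python’s built-in HTML parser. The sketch assumes the page HTML has already been fetched. It also assumes the set of indexable URLs (200 status, no noindex) is known from a crawl. The function names and the three issue types are illustrative, not an exhaustive audit.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin

class CanonicalCollector(HTMLParser):
    """Collects the href of every <link rel="canonical"> element in a page."""

    def __init__(self):
        super().__init__()
        self.canonicals = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        rel = (attributes.get("rel") or "").lower().split()
        if tag == "link" and "canonical" in rel and attributes.get("href"):
            self.canonicals.append(attributes["href"])

def audit_canonical(page_url, html, indexable_urls):
    """Return a list of canonical issues for one page (empty list means no issue found)."""
    collector = CanonicalCollector()
    collector.feed(html)
    collector.close()
    # Resolve relative hrefs against the page URL before comparing them.
    targets = {urljoin(page_url, href) for href in collector.canonicals}
    if not targets:
        return ["missing canonical"]
    if len(targets) > 1:
        return ["conflicting canonicals: " + ", ".join(sorted(targets))]
    target = targets.pop()
    if target not in indexable_urls:
        return [f"canonical points to non-indexable URL {target}"]
    return []
```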

Core Web Vitals and Site Performance: Beyond Page Speed

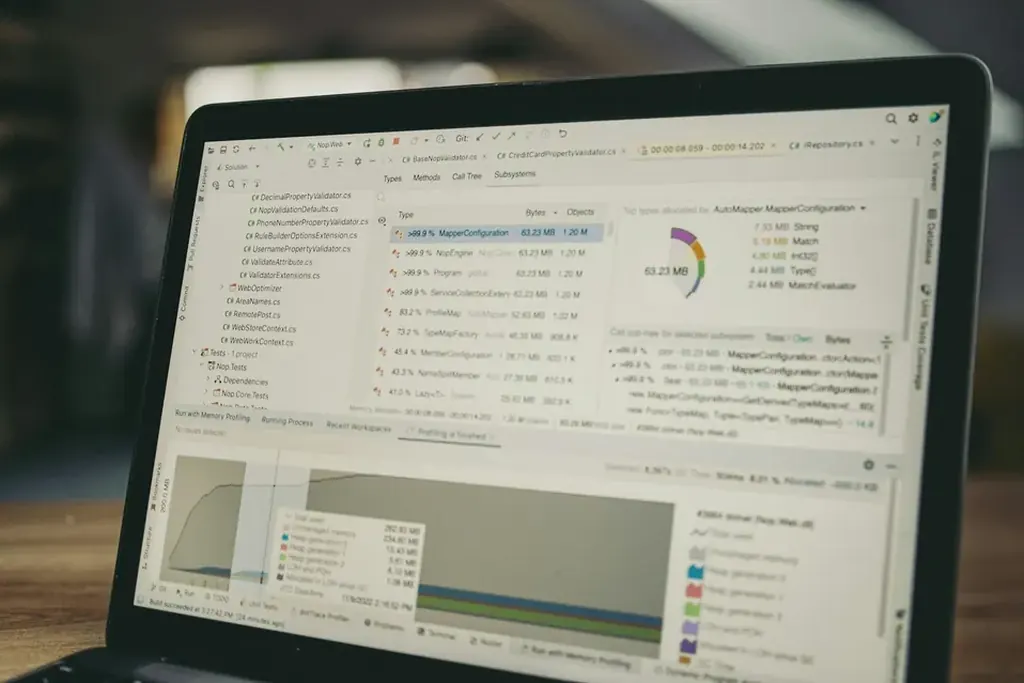

Site performance optimization has evolved from a purely user-experience consideration into a ranking signal through Google’s Core Web Vitals metrics. These are Largest Contentful Paint (LCP), Interaction to Next Paint (INP), and Cumulative Layout Shift (CLS). INP replaced First Input Delay (FID) as the responsiveness metric in March 2024. The three metrics measure loading performance, interactivity, and visual stability, respectively. An expert agency approaches performance optimization not as a one-time speed boost but as a continuous process of measurement, diagnosis, and remediation.
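Google’s published thresholds make the pass/fail logic easy to express. Each metric is assessed at the 75th percentile of real-user (field) data. A page or page group passes only when all three metrics fall in the “good” band. The function names below are illustrative.

```python
# Google's published Core Web Vitals thresholds: (good upper bound, poor lower bound).
THRESHOLDS = {
    "LCP": (2.5, 4.0),   # seconds
    "INP": (200, 500),   # milliseconds
    "CLS": (0.1, 0.25),  # unitless layout-shift score
}

def rate_metric(metric, p75):
    """Rate a 75th-percentile field value as good, needs improvement, or poor."""
    good, poor = THRESHOLDS[metric]
    if p75 <= good:
        return "good"
    if p75 <= poor:
        return "needs improvement"
    return "poor"

def passes_core_web_vitals(p75_values):
    """A page group passes only if every metric is rated good."""
    return all(rate_metric(metric, value) == "good" for metric, value in p75_values.items())
```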

LCP optimization typically requires addressing server response times, render-blocking resources, and image delivery. Many sites struggle with LCP because their largest element, often a hero image or a large text block, loads slowly due to uncompressed assets or inefficient caching policies. The agency implements solutions such as compressing and correctly sizing images, preloading critical resources, and leveraging content delivery networks to reduce latency. It also lazy loads below-the-fold images, but never the LCP element itself, because lazy loading the LCP element delays it.
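In template code, the difference between the LCP element and other images usually comes down to a few attributes. The helper below is a hypothetical server-side sketch. It marks the hero image with fetchpriority="high" and leaves it eagerly loaded, lazy loads every other image, and emits a preload hint for the document head. It assumes the LCP candidate is known for each template.

```python
from html import escape

def image_tag(src, width, height, alt, is_lcp_candidate):
    """Render an <img> tag (hypothetical template helper)."""
    attrs = [
        f'src="{escape(src)}"',
        f'width="{width}"',
        f'height="{height}"',
        f'alt="{escape(alt)}"',
    ]
    if is_lcp_candidate:
        # The LCP image should be requested early and never lazy loaded.
        attrs.append('fetchpriority="high"')
    else:
        # Below-the-fold images can wait until the user scrolls near them.
        attrs.append('loading="lazy"')
    return "<img " + " ".join(attrs) + ">"

def preload_link(src):
    """A <head> hint so the browser discovers the hero image before parsing the body."""
    return f'<link rel="preload" as="image" href="{escape(src)}" fetchpriority="high">'
```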

INP measures a page’s responsiveness to user interactions. High INP values often stem from long-running JavaScript tasks that block the main thread. This is particularly common on sites with complex third-party scripts, analytics tags, or chat widgets. The optimization process involves auditing script execution order, deferring non-critical JavaScript, and using web workers to offload heavy computations.
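INP itself can only be measured from real user interactions, but long tasks can be quantified in the lab. The sketch below follows the idea behind Lighthouse’s Total Blocking Time. Any main-thread task longer than 50 ms counts as a long task, and only the portion beyond 50 ms counts as blocking. The sketch assumes task durations have already been exported from a performance trace. It is a diagnostic proxy, not an INP calculation.

```python
LONG_TASK_MS = 50  # Tasks longer than this count as "long tasks" in browser terminology.

def blocking_summary(task_durations_ms):
    """Summarize main-thread tasks exported from a lab performance trace."""
    long_tasks = [d for d in task_durations_ms if d > LONG_TASK_MS]
    # Only the time beyond the 50 ms budget is counted as blocking.
    blocking_time = sum(d - LONG_TASK_MS for d in long_tasks)
    return {
        "long_tasks": len(long_tasks),
        "blocking_time_ms": blocking_time,
        "worst_task_ms": max(task_durations_ms, default=0),
    }
```

Tracking the worst task alongside the total helps identify which single script, often a third-party tag, to defer or split first.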

CLS quantifies unexpected layout shifts that occur when elements move after the page has already rendered. Common culprits include images and ads without explicit dimensions, dynamically injected content, and web fonts that cause layout recalculation. The agency ensures that all media elements have defined width and height attributes, that ad slots reserve space, and that font loading does not cause visible reflow.
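Missing dimensions are straightforward to detect in rendered HTML. This sketch flags img and iframe elements that lack either a width or a height attribute. It does not see space reserved through CSS, for example with aspect-ratio. Flagged elements are therefore candidates for review rather than confirmed problems.

```python
from html.parser import HTMLParser

class DimensionAuditor(HTMLParser):
    """Flags <img> and <iframe> elements without explicit width and height attributes."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag not in ("img", "iframe"):
            return
        attributes = dict(attrs)
        if not attributes.get("width") or not attributes.get("height"):
            self.missing.append(attributes.get("src") or "(no src)")

def elements_without_dimensions(html):
    """Return the src of each media element that cannot reserve its layout space."""
    auditor = DimensionAuditor()
    auditor.feed(html)
    auditor.close()
    return auditor.missing
```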

Crawlability and Indexation: Ensuring Search Engines Can Find Your Content

Even a perfectly optimized page is of limited value if search engines cannot find it. Crawlability refers to the ability of search engine bots to access and navigate a site’s content, while indexation determines which of those crawled pages are stored in the search engine’s index and are eligible to appear in search results.

The XML sitemap is the primary tool for communicating a site’s indexable URLs to search engines. However, many sitemaps contain errors that undermine their effectiveness: outdated URLs, URLs that redirect or return errors, or pages marked noindex. An expert agency generates a dynamic sitemap that updates automatically as content is added or removed. The sitemap lists only canonical, indexable, high-value URLs and excludes low-quality or thin content. It is then submitted to Google Search Console and monitored for indexation errors.
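These inclusion rules can be enforced at generation time. The sketch below assumes a hypothetical Page record produced by a site crawl or CMS query. It writes only URLs that return 200, are not noindexed, and are self-canonical. It omits the priority and changefreq fields, which Google has said it ignores. ElementTree also escapes characters such as ampersands, as the sitemap protocol requires.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

@dataclass
class Page:
    """Hypothetical crawl/CMS record for one URL."""
    url: str
    status: int
    noindex: bool
    canonical: str
    lastmod: str  # W3C date, e.g. "2024-05-01"

def build_sitemap(pages):
    """Emit sitemap XML containing only indexable, self-canonical URLs that return 200."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for page in pages:
        if page.status != 200 or page.noindex or page.canonical != page.url:
            continue
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = page.url
        ET.SubElement(entry, "lastmod").text = page.lastmod
    declaration = '<?xml version="1.0" encoding="UTF-8"?>\n'
    return declaration + ET.tostring(urlset, encoding="unicode")
```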

Internal linking architecture plays an equally critical role in crawlability. A site with a flat hierarchy—where every page is within a few clicks of the homepage—enables bots to discover content efficiently. Conversely, deep silos with excessive nesting can leave important pages stranded. The agency conducts a link graph analysis to identify pages that receive no internal links, then implements a strategic internal linking plan that distributes link equity and guides crawlers to priority content.
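Link graph analysis reduces to a breadth-first search from the homepage. In this sketch, the link graph is assumed to come from a crawl, as a mapping of each page to the pages it links to. The list of known pages is assumed to come from the sitemap or analytics. The depth limit of 3 is an illustrative choice rather than a standard. Known pages that the search cannot reach are orphans.

```python
from collections import deque

def click_depths(links, home):
    """Breadth-first search over internal links; returns the minimum clicks to each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, ()):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

def orphans_and_deep_pages(links, home, known_pages, max_depth=3):
    """Return (orphans, deep pages); max_depth is a hypothetical policy value."""
    depths = click_depths(links, home)
    orphans = sorted(page for page in known_pages if page not in depths)
    deep = sorted(page for page, depth in depths.items() if depth > max_depth)
    return orphans, deep
```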

Duplicate content detection extends beyond canonical tags. Parameter-heavy URLs, session IDs, and printer-friendly versions can all create multiple indexable versions of the same content. The audit uses tools to identify clusters of near-identical pages and implements solutions such as 301 redirects, canonical tags, or meta robots noindex directives as appropriate. The goal is to ensure that every indexable URL offers unique value and that ranking signals are not fragmented.
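For parameter-driven duplicates, a first pass can be purely URL-based. Each URL is normalized by dropping parameters that do not change the content, and URLs that collapse to the same key are grouped. The list of ignored parameters below is hypothetical and must be confirmed for each site. Content-level near-duplicate detection, such as shingling page text, is a separate, complementary technique not shown here.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters that never change page content on this site.
IGNORED_PARAMS = {"sessionid", "sid", "print", "ref"}

def normalize(url):
    """Lowercase the host, drop ignored and utm_* parameters, sort the rest, drop fragments."""
    parts = urlsplit(url)
    kept = sorted(
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in IGNORED_PARAMS and not key.lower().startswith("utm_")
    )
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, urlencode(kept), ""))

def duplicate_clusters(urls):
    """Group URLs that normalize to the same key; keep only groups with duplicates."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}
```

Each cluster key is a candidate canonical target. Whether to redirect, canonicalize, or noindex the other members remains a per-case decision.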

On-Page Optimization and Content Strategy: Aligning Content with Search Intent

On-page optimization is often misunderstood as a mechanical exercise of inserting keywords into titles and meta descriptions. In practice, it is a strategic discipline that requires understanding the relationship between search queries, user intent, and content structure. An expert agency begins with keyword research that goes beyond search volume to analyze the intent behind each query.

Intent mapping categorizes keywords into informational, navigational, commercial, and transactional buckets. A page targeting a transactional query—such as “buy enterprise SEO software”—must include product comparisons, pricing, and a clear call to action. A page targeting an informational query—such as “how to conduct an SEO audit”—should provide a comprehensive guide with step-by-step instructions. When intent and content are misaligned, bounce rates may increase, engagement metrics may decline, and search engines may eventually demote the page.
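A first-pass intent map is often built from query modifiers before manual review. The modifier lists below are illustrative assumptions, not a standard taxonomy. In practice, the classification is checked against the pages that actually rank for each query.

```python
# Hypothetical modifier lists, checked in this order (dicts preserve insertion order).
INTENT_MODIFIERS = {
    "transactional": ("buy", "price", "pricing", "discount", "order", "quote"),
    "commercial": ("best", "vs", "review", "compare", "alternatives", "top"),
    "informational": ("how to", "what is", "why", "guide", "tutorial", "examples"),
}

def classify_intent(query, brand_terms=()):
    """Assign a query to an intent bucket using whole-word modifier matches."""
    padded = f" {query.lower()} "  # Padding makes " buy " match only as a whole word.
    for intent, modifiers in INTENT_MODIFIERS.items():
        if any(f" {modifier} " in padded for modifier in modifiers):
            return intent
    # A brand query with no other modifier is treated as navigational.
    if any(f" {brand.lower()} " in padded for brand in brand_terms):
        return "navigational"
    return "unclassified"
```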

The content strategy phase involves creating an editorial plan that addresses the full spectrum of the target audience’s needs. This includes pillar pages that cover broad topics in depth, cluster content that addresses specific subtopics, and supporting assets such as case studies, whitepapers, and FAQs. The agency maps each piece of content to specific keywords and ensures that internal links connect related content to build topical authority.

On-page optimization extends to the technical implementation of content. Title tags should be unique, descriptive, and short enough to avoid truncation in search results, which Google applies by pixel width rather than by a fixed character count. Meta descriptions should summarize the page’s value proposition and include a relevant call to action. Header tags (H1, H2, H3) should structure the content logically and include target keywords where natural. Image alt text should describe the image content accurately and include relevant keywords only where they genuinely describe the image. These elements may seem basic, but audits consistently reveal sites where title tags are duplicated, meta descriptions are missing, or header tags are used for styling rather than structure.
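These checks are easy to automate across a crawl export. The sketch assumes a hypothetical dictionary that maps each URL to its title, meta description, and H1 count. The 60- and 160-character limits are common rules of thumb standing in for pixel-based truncation. The one-H1 check reflects a convention, not a Google requirement.

```python
from collections import Counter

# Rule-of-thumb limits (assumptions); search engines truncate by rendered width.
TITLE_MAX_CHARS = 60
DESCRIPTION_MAX_CHARS = 160

def audit_on_page(pages):
    """pages: dict of URL -> {"title": str, "description": str, "h1_count": int}."""
    title_counts = Counter(
        p["title"].strip().lower() for p in pages.values() if p["title"].strip()
    )
    issues = {}
    for url, p in pages.items():
        problems = []
        title = p["title"].strip()
        description = p["description"].strip()
        if not title:
            problems.append("missing title")
        elif title_counts[title.lower()] > 1:
            problems.append("duplicate title")
        if len(title) > TITLE_MAX_CHARS:
            problems.append("title may be truncated")
        if not description:
            problems.append("missing meta description")
        elif len(description) > DESCRIPTION_MAX_CHARS:
            problems.append("description may be truncated")
        if p["h1_count"] != 1:  # Convention only: one H1 per page.
            problems.append(f"{p['h1_count']} H1 elements")
        if problems:
            issues[url] = problems
    return issues
```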

Link Building and Backlink Profile Management: Building Authority Sustainably

A site’s backlink profile is one of the strongest signals of authority to search engines. However, not all links are equal. A single link from a highly authoritative, relevant site can carry more weight than dozens of links from low-quality directories or spammy forums. An expert agency focuses on quality over quantity, building links through strategies that are sustainable and compliant with search engine guidelines.

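Link building begins with an audit of the existing backlink profile. The audit uses third-party metrics such as Moz’s Domain Authority and Majestic’s Trust Flow, which approximate how search engines evaluate links but are not used by them directly. The agency identifies toxic links, meaning those from spammy sites, link farms, or penalized domains. Where a manual action or a history of paid or manipulative links warrants it, the agency disavows them through Google’s disavow tool. It then develops a link acquisition strategy based on the site’s niche and target audience. Common approaches include guest posting on authoritative industry blogs, creating shareable data-driven content, and building relationships with journalists and influencers. Another is broken link building: offering replacement resources where other sites still link to broken or removed pages, including defunct competitor content.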
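When disavowal is warranted, Google’s disavow tool accepts a plain-text file. The file holds one URL or domain:example.com entry per line, with lines starting with # treated as comments. The sketch below only formats that file. Deciding which domains to reject is assumed to be a manual review step, and the function names are hypothetical.

```python
from urllib.parse import urlsplit

def linking_domain(url):
    """Hostname of a backlink source, lowercased, without a leading www."""
    host = urlsplit(url).hostname or ""
    return host[4:] if host.startswith("www.") else host

def build_disavow(backlink_urls, rejected_domains):
    """Return disavow-file text for backlinks whose domain a human reviewer rejected."""
    rejected = {domain.lower() for domain in rejected_domains}
    flagged = sorted({linking_domain(url) for url in backlink_urls} & rejected)
    lines = ["# Domains judged to be link-scheme sources after manual review"]
    lines += [f"domain:{domain}" for domain in flagged]
    return "\n".join(lines) + "\n"
```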

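Trust Flow, a proprietary Majestic metric, estimates link quality from how closely a site is linked to a hand-picked set of trusted seed sites. Its companion metric, Citation Flow, estimates link volume. A Trust Flow that is high relative to Citation Flow generally indicates a clean, authoritative link profile. When the opposite is true, with a high Citation Flow but a low Trust Flow, the site probably has many links from low-quality sources. Search engine spam systems are designed to discount links of that kind, and egregious patterns can lead to a manual action. Because these are third-party estimates rather than signals search engines use directly, the agency treats the ratio as a diagnostic heuristic. It monitors the ratio regularly and adjusts the link building strategy to keep it healthy.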
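The ratio itself is simple arithmetic on Majestic’s 0 to 100 scores. The 0.5 review threshold below is a hypothetical cut-off chosen for illustration, not a Majestic or search engine rule. For example, a linking domain with Trust Flow 12 and Citation Flow 48 has a ratio of 0.25 and would be flagged for manual review.

```python
REVIEW_THRESHOLD = 0.5  # Hypothetical cut-off, not a Majestic or search engine rule.

def trust_ratio(trust_flow, citation_flow):
    """Trust Flow divided by Citation Flow (both Majestic scores on a 0 to 100 scale)."""
    if citation_flow == 0:
        return None  # No measurable link volume, so the ratio is undefined.
    return trust_flow / citation_flow

def domains_to_review(metrics, threshold=REVIEW_THRESHOLD):
    """metrics: dict of linking domain -> (trust_flow, citation_flow)."""
    flagged = []
    for domain, (tf, cf) in metrics.items():
        ratio = trust_ratio(tf, cf)
        if ratio is not None and ratio < threshold:
            flagged.append(domain)
    return sorted(flagged)
```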

Risk Management and Algorithm Resilience

SEO is inherently risky because the search landscape is dynamic. Algorithm updates, competitor actions, and changes in user behavior can all impact rankings. An expert agency does not promise immunity from these factors; instead, it builds resilience into the SEO strategy through diversification and continuous monitoring.

The primary risk is over-reliance on any single ranking factor. A site that achieves high rankings primarily through backlinks is vulnerable to a link-related algorithm update. A site that relies on thin content is vulnerable to content quality updates. The agency mitigates these risks by maintaining a balanced approach that addresses technical health, content quality, user experience, and authority building simultaneously.

Another significant risk is the accumulation of technical debt over time. As sites grow, they often accumulate legacy issues such as outdated redirects, orphaned pages, and bloated databases. Regular technical audits—conducted at least quarterly—identify and resolve these issues before they compound. The agency also implements monitoring systems that alert the team to sudden drops in crawl rate, spikes in 404 errors, or changes in indexation status.
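Such alerts can start as a simple comparison of daily crawl statistics against a trailing baseline. The sketch assumes daily counts exported from server logs or from Search Console’s crawl stats. The 30% drop and 2x spike thresholds are hypothetical defaults to be tuned for each site.

```python
from statistics import mean

def detect_anomalies(history, today, drop_pct=0.3, spike_factor=2.0):
    """Compare today's crawl stats against the average of previous days.

    history: list of dicts with "crawled" and "errors_404" counts for previous days.
    today: dict with the same keys. Both thresholds are hypothetical defaults.
    """
    baseline_crawl = mean(day["crawled"] for day in history)
    baseline_404 = mean(day["errors_404"] for day in history)
    alerts = []
    if today["crawled"] < baseline_crawl * (1 - drop_pct):
        alerts.append(f"crawl rate down to {today['crawled']} from ~{baseline_crawl:.0f}/day")
    # Require at least one error so a zero baseline does not alert on noise-free days.
    if today["errors_404"] > max(baseline_404 * spike_factor, 1):
        alerts.append(f"404s up to {today['errors_404']} from ~{baseline_404:.0f}/day")
    return alerts
```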

Measuring Success: Beyond Rankings

While rankings are a visible indicator of SEO performance, they are not the only metric that matters. An expert agency tracks a comprehensive set of key performance indicators that reflect the health of the organic channel:

- Organic traffic growth by segment (branded vs. non-branded, informational vs. transactional)

- Indexation rate (percentage of submitted URLs that are indexed)

- Crawl efficiency (share of search engine crawler requests that reach indexable, canonical URLs returning a 200 status)

- Core Web Vitals pass rate (percentage of pages or page groups whose 75th-percentile field data meets the “good” threshold for all three metrics)

- Backlink profile health (ratio of Trust Flow to Citation Flow, number of toxic links)

- Conversion rate from organic traffic (leads, sales, or other defined actions)
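Most of these KPIs are ratios of counts the team already collects. The sketch below assumes a hypothetical dictionary of raw counts. Crawl efficiency is defined here as the share of Googlebot requests that hit indexable URLs returning 200, which is one possible operational definition.

```python
def pct(numerator, denominator):
    """Percentage rounded to one decimal place; 0.0 when there is no data."""
    return round(100 * numerator / denominator, 1) if denominator else 0.0

def organic_kpis(stats):
    """stats: hypothetical dict of raw counts gathered from Search Console, logs, and analytics."""
    return {
        "indexation_rate_pct": pct(stats["indexed_urls"], stats["submitted_urls"]),
        "crawl_efficiency_pct": pct(stats["crawls_on_indexable_200"], stats["googlebot_requests"]),
        "cwv_pass_rate_pct": pct(stats["urls_passing_cwv"], stats["urls_assessed"]),
        "organic_conversion_rate_pct": pct(stats["organic_conversions"], stats["organic_sessions"]),
    }
```

For example, 1,700 indexed URLs out of 2,000 submitted gives an indexation rate of 85.0%.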

The Path Forward: Operationalizing SEO

SEO is not a project with a defined end date. It is an ongoing operational discipline that requires continuous investment in technical maintenance, content creation, and relationship building. An expert agency provides the expertise, tools, and processes to manage this discipline at scale, but the ultimate success depends on the client’s willingness to implement recommendations and allocate resources.

The most effective partnerships are those where the agency functions as an extension of the client’s team, collaborating on content calendars, coordinating with developers on technical fixes, and aligning SEO goals with broader business objectives. The agency provides the roadmap; the client provides the execution capacity. Together, they build a sustainable organic channel that generates predictable, compounding returns over time.

Before engaging any SEO agency, verify their track record through case studies and client references. Ask for a sample audit that demonstrates their approach to technical analysis. Ensure that their methodology aligns with search engine guidelines and that they do not promise outcomes that are outside their control. The right agency will be transparent about what is achievable, what is uncertain, and what it will take to get there.
